What Is Agentic AI and Why Does It Matter for Developers?

What is agentic AI and why does it matter for developers in 2026? A complete guide covering how agents work, the ReAct loop, MCP, multi-agent architecture, security risks, and practical starting points.

For years, the dominant interaction model with AI was simple: you prompt, it responds. You ask a question, it generates text. You describe a function, it writes the code. The human remains the actor. The AI remains the tool.

Agentic AI breaks that model entirely.

An agentic AI system doesn't just generate output for a human to act on. It pursues goals. It decides what to do next, takes a real action — calling an API, editing a file, browsing a website, running code — observes the result, and decides again. The loop continues until the task is done, the agent gets stuck, or a human steps in.

In 2026, this distinction is no longer theoretical. Agentic AI ships production code, runs literature reviews across millions of papers, manages outbound sales campaigns, controls browsers and computers to complete multi-step tasks, automates customer support workflows, and orchestrates complex business processes — autonomously, end to end.

For developers, this shift is not a distant trend to monitor. It is actively reshaping how software is built, what skills are valued, and what the engineering role looks like in high-performing teams. This guide explains what agentic AI is, how it works, what makes it different from earlier AI tooling, and what it specifically means for developers in 2026 and beyond.

What is agentic AI? A precise definition

Agentic AI describes artificial intelligence systems that pursue goals through their own actions, rather than only producing output for a human to act on.

The word "agentic" comes from agency — the capacity to act on the world. A traditional language model generates plausible text. An agentic system uses that generation as one step inside a larger control flow that includes tool use, memory, planning, and feedback.

The core loop of any agentic system is:

- Perceive — observe the current state of the environment (files, APIs, browser state, conversation history)

- Plan — decompose the goal into steps using chain-of-thought reasoning

- Act — invoke a tool (run code, call an API, read/write a file, search the web)

- Observe — see the result of that action

- Decide — update the plan based on what happened and repeat

This is called a ReAct loop — Reason, Act — and it is the architectural foundation of nearly every agentic system deployed in production today.

The key distinction is the loop itself. A chatbot answers. An agent acts, observes, and adapts — until the job is done.

Agenticness is a spectrum, not a binary. A simple coding assistant that autocompletes your function is not agentic. A system that takes a GitHub issue, checks out the relevant branch, writes a fix, runs the test suite, handles test failures, and opens a pull request — without a human touching the keyboard — is fully agentic. Most tools in 2026 sit somewhere between these poles.

How agentic AI works: the core components

Understanding the architecture helps developers build with it more effectively and reason about its failure modes.

1. The reasoning model (the brain)

At the centre of any agentic system is a large language model — GPT-5, Claude, Gemini, or an open-source equivalent — acting as the reasoning layer. It interprets the goal, decides which tool to use next, generates the inputs for that tool, and processes the output.

What makes frontier models in 2026 capable of agentic work is not just raw capability — it is their ability to maintain context across long multi-step workflows, follow structured instructions reliably, and adapt plans when reality doesn't match expectations.

2. Tools (the hands)

A model alone can reason but not act. Tools are what give agents real-world capability. Common tool types include:

- Code execution — running Python, shell commands, or test suites in a sandboxed environment

- File system access — reading, writing, moving, and deleting files

- Web browser — navigating pages, clicking elements, filling forms

- API calls — querying databases, calling external services, reading documentation

- Web search — retrieving current information beyond the model's training cutoff

- Memory systems — reading from and writing to persistent storage

The set of tools an agent has access to defines its capability surface — and its risk surface. Tools that can modify state (write files, call external APIs, make database changes) are where careful governance matters most.

3. Memory

Agents need to remember context across steps. Four types of memory appear in production agentic systems:

In-context memory — everything within the model's current context window. Temporary, limited to the current session, but immediately accessible.

External (retrieval) memory — a vector database or other retrieval system the agent can query to fetch relevant information. Enables agents to work with knowledge far exceeding what fits in context.

Episodic memory — a record of past actions, observations, and outcomes the agent can reference. Allows learning from previous runs and avoiding repeated mistakes.

Procedural memory — stored instructions, patterns, or workflows the agent knows to follow for specific task types.

4. Orchestration

For complex tasks, a single agent working in one context window is limiting. Multi-agent architectures use an orchestrator — a coordinating agent that decomposes a goal into subtasks and assigns them to specialised sub-agents working in parallel.

Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025, signalling how quickly this architectural pattern is moving from concept to production demand.

Orchestrator Agent

├── Research Agent (web search, document retrieval)

├── Code Agent (writing, running, testing code)

├── Review Agent (quality checks, security scanning)

└── Communication Agent (PR descriptions, stakeholder updates)

Each sub-agent has a focused context and a specialised set of tools. The orchestrator synthesises their outputs into a coherent result.

5. Guardrails

Production agentic systems are not deployed without controls. Permission boundaries define what actions an agent is allowed to take. Approval checkpoints pause execution for human review before high-stakes actions. Audit logs record every action taken for compliance and debugging. Dry-run modes let agents plan without executing, so humans can review the plan before it runs.

This governance layer is not optional. As autonomy increases, so does the need for clear controls — governance is being built directly into agent system design in 2026, not bolted on after deployment.

Agentic AI vs traditional AI tooling: what actually changed

Understanding the contrast makes the significance clearer.

| Dimension | Traditional AI (LLM chat/copilot) | Agentic AI |

|---|---|---|

| Interaction model | Single turn: prompt → response | Multi-turn loop: plan → act → observe → adapt |

| Who acts? | Human acts on AI output | AI acts directly on systems and tools |

| Task scope | Atomic, discrete tasks | Multi-step, long-horizon workflows |

| Statefulness | Stateless (forgets each conversation) | Stateful (maintains context across steps) |

| Tool use | None or single-turn code generation | Active tool invocation (run code, call APIs, modify files) |

| Error handling | Human corrects and reprompts | Agent detects failures and self-corrects |

| Human involvement | Required for every action | Required only at defined checkpoints |

The shift is not incremental. It's architectural. Traditional AI tools are amplifiers — they make human actions faster. Agentic systems are actors — they take actions themselves, with humans in the oversight role rather than the execution role.

Why this matters specifically for developers

1. Your role is shifting from executor to orchestrator

The engineer of 2026 will spend less time writing foundational code and more time orchestrating a dynamic portfolio of AI agents, reusable components, and external services. Their value will lie in designing the overarching system architecture, defining the precise objectives and guardrails for their AI counterparts, and rigorously validating the final output.

This is a move from hands-on keyboard creation to high-level system design, quality assurance, and strategic oversight. The core skill becomes systems thinking, not just syntax.

This doesn't mean writing code is going away — it means the most valuable developer in 2026 is the one who can design systems where AI does the implementation and humans do the design, validation, and governance.

2. Tasks that once took weeks now take hours

The most immediate and tangible impact of agentic AI is on development velocity. Tasks that once required weeks of cross-team coordination can become focused working sessions. Debugging sessions that historically required deep codebase familiarity can be delegated to agents that read the entire codebase, trace the stack, and propose fixes.

Engineers describe developing intuitions for AI delegation over time: they delegate tasks that are easily verifiable — where they can quickly check correctness — or are low-stakes, like quick diagnostic scripts. The more conceptually difficult or design-dependent a task, the more likely engineers keep it for themselves or work through it collaboratively with an agent rather than handing it off entirely.

3. Single-agent vs multi-agent architecture is a real design decision

As you build more complex systems, the architectural choice between single-agent and multi-agent workflows becomes a genuine engineering decision with real tradeoffs:

Single-agent workflows process tasks sequentially through one context window. Simpler to build, debug, and monitor. Better for tasks where full context must be maintained across every step. Limited by context window size and sequential processing speed.

Multi-agent workflows use an orchestrator to coordinate specialised agents working in parallel — each with dedicated context — then synthesise results into integrated output. More powerful for complex, parallelisable tasks. More complex to build, debug, and govern. Context isolation between agents requires careful design of what information flows where.

Most teams should start with single-agent workflows and introduce multi-agent architecture when they hit concrete limitations — not as a default starting assumption.

4. The Model Context Protocol (MCP) is becoming the standard you need to know

MCP — originally developed by Anthropic and now donated to the Linux Foundation's Agentic AI Foundation — is an open standard for connecting AI agents to external tools, data sources, and APIs. Think of it as a universal interface layer: instead of every agent needing custom integration code for every tool, MCP-compatible tools and agents interoperate through a shared protocol.

For developers, MCP matters for two reasons. First, it is becoming the default integration standard for agentic tooling — understanding it is increasingly a baseline expectation for developers building AI-powered systems. Second, organisations that build their agentic workflows on MCP-compatible infrastructure preserve interoperability across models and vendors, reducing the risk of proprietary lock-in.

5. Security and trust boundaries are a first-class engineering concern

Agentic systems introduce a category of security risk that most developers haven't had to reason about before: prompt injection. An adversarial actor can embed instructions in content the agent is expected to process — a web page, a document, an API response — that hijack the agent's behaviour.

When an agent has access to tools that modify state (files, databases, external APIs), prompt injection vulnerabilities have real-world consequences beyond generating bad text. A successfully injected agent could exfiltrate data, make unauthorised API calls, or corrupt files.

Developers building agentic systems need to treat the content an agent processes as untrusted input — the same mental model applied to SQL injection prevention — and design their systems accordingly. Input validation, sandboxed execution environments, least-privilege tool access, and human approval checkpoints for high-stakes actions are the core defences.

6. Evaluation and observability require new approaches

How do you know if your agentic system is working correctly? Traditional monitoring — uptime checks, error rates, response times — is necessary but insufficient. Agentic systems fail in new ways:

- The agent achieves the immediate task but misunderstands the broader goal

- The agent takes a correct action sequence that produces a correct result for the wrong reasons

- The agent gets stuck in an action loop, repeatedly retrying something that will never succeed

- Outputs are locally correct but globally inconsistent across a multi-agent workflow

Developers building agentic systems need to instrument at the step level — logging every tool call, its input, its output, and the agent's interpretation of that output — not just at the request/response level. Evaluation frameworks need test cases that validate the action sequence, not just the final output.

The three phases of agentic AI adoption

Agentic AI will evolve through distinct phases, each demanding different engineering structures, skills, and governance models:

Phase 1: Assistance — AI supports discrete, atomic tasks. Autocomplete, code generation, test writing. Humans remain the primary actors. This is largely where most teams were in 2024–2025.

Phase 2: Augmentation — AI manages multi-step processes and workflows within defined domains. An agent autonomously handles a CI/CD pipeline stage, or takes a ticket from backlog to draft PR. Humans define the goals and review the outputs. This is where leading engineering teams are operating in 2026.

Phase 3: Autonomy — AI operates across domains and makes decisions guided by high-level business objectives. Multi-agent systems coordinate entire product development cycles, customer journeys, or operational workflows with human involvement concentrated in governance and strategy.

Most engineering teams are in transition between Phase 1 and Phase 2 in 2026. The failure mode is trying to jump directly to Phase 3 before the foundation is built.

Practical starting points for developers

You don't need to build a sophisticated multi-agent system to start learning how agentic AI works. Here's a progression that builds genuine capability:

Week 1–2: Understand the loop Build a simple agent from scratch using an SDK like Anthropic's Claude API, OpenAI's API, or an open-source framework like LangChain or LlamaIndex. Give it one tool — web search or code execution — and observe how it plans, acts, and handles failures. Reading the trace of an agent's reasoning is the fastest way to build intuition about where things go wrong.

Month 1: Experiment with existing agentic tools Use GitHub Copilot Workspace, Claude Code, Cursor, or a similar agentic coding tool on a real project. Pay attention to which tasks you delegate successfully and which ones require heavy correction. Build your personal intuition for the delegation boundary.

Month 2–3: Build something purpose-specific Identify a repetitive, multi-step workflow in your own development process — running a specific type of code review, generating test cases for a module, summarising PRs — and build an agent to handle it. Keep the scope narrow. The goal is a working system you can evaluate, not an impressive demo.

Month 3+: Study multi-agent patterns and MCP Once you've built and operated a single-agent system, study the core multi-agent design patterns: ReAct, Reflection, Tool Use, Planning, and Human-in-the-Loop. Read the MCP specification. Understand how orchestrators coordinate sub-agents and how context flows between them.

Frameworks and tools to know:

| Tool/Framework | Use case |

|---|---|

| LangChain / LangGraph | Agent orchestration framework, wide ecosystem |

| LlamaIndex | RAG and retrieval-heavy agent workflows |

| Claude Code | Agentic coding in the terminal, repo-level tasks |

| AutoGen (Microsoft) | Multi-agent conversation frameworks |

| CrewAI | Role-based multi-agent orchestration |

| Anthropic MCP | Open standard for tool/agent interoperability |

| Semantic Kernel | Enterprise-grade agent framework, .NET and Python |

| Prefect / Temporal | Workflow orchestration for long-running agent tasks |

What the data says about where this is going

The scale of agentic AI adoption in 2026 is significant enough that the numbers themselves tell the story:

While nearly two-thirds of organisations are experimenting with AI agents, fewer than one in four have successfully scaled them to production. This gap — between experimentation and scaled production — is 2026's central business challenge in engineering organisations.

McKinsey research reveals that high-performing organisations are three times more likely to scale agents than their peers, but success requires more than technical excellence. The key differentiator is the willingness to redesign workflows rather than simply layering agents onto legacy processes. Organisations that treat agents as productivity add-ons rather than architectural elements consistently fail to scale.

The agentic AI inflection point of 2026 will be remembered not for which models topped the benchmarks, but for which organisations successfully bridged the gap from experimentation to scaled production.

For individual developers, this creates a clear opportunity. The skills required to design, build, evaluate, and govern agentic systems are not yet commoditised. Engineers who build genuine expertise in agentic architectures — not just prompt engineering, but system design, tool integration, multi-agent coordination, and governance — are positioned ahead of a wave that is only beginning to reach production scale.

The honest assessment: what agentic AI can and cannot do

What it does well today:

- Long-horizon coding tasks with clear success criteria (write this feature, fix this bug, write tests for this module)

- Research and synthesis across large document sets

- Repetitive multi-step workflows with structured inputs and outputs

- Code review, security scanning, and documentation generation at scale

- Workflow coordination across multiple systems when APIs are available

Where it still struggles:

- Tasks requiring deep contextual judgment that isn't captured in the system prompt or available tools

- Novel problem-solving where the right approach isn't discoverable through tool use alone

- Workflows where failure modes are expensive and human review at every step is required

- Tasks requiring genuine creativity or taste — design decisions, product strategy, relationship management

The most effective developers in 2026 are not those who maximise AI autonomy. They're the ones who accurately identify which tasks are well-suited for delegation, design the right guardrails for those tasks, and keep the genuinely hard problems for themselves.

Frequently asked questions

What is the difference between agentic AI and a chatbot? A chatbot responds to prompts — it generates output for a human to act on. Agentic AI takes actions itself: calling APIs, running code, modifying files, browsing the web, and coordinating across tools. The line is whether the system acts in a loop on real systems, not whether it sounds sophisticated.

Is agentic AI the same as autonomous AI? They're related but not identical. Autonomy is one dimension of agenticness — how much the system acts without human approval. An agentic system can be highly autonomous (acts end-to-end without checkpoints) or partially autonomous (pauses for human review at defined stages). Most production agentic systems in 2026 sit somewhere in the middle, with autonomy calibrated to the risk level of the actions involved.

What skills do developers need to build agentic AI systems? Beyond standard software engineering, the most important skills are: understanding of LLM capabilities and limitations, tool integration and API design, multi-agent orchestration patterns, evaluation framework design, and security reasoning around untrusted inputs and prompt injection. Systems thinking — designing how components interact rather than just writing component logic — becomes the primary skill.

What is MCP and why do developers need to know it? The Model Context Protocol is an open standard for connecting AI agents to external tools, data sources, and APIs. Developed by Anthropic and now maintained by the Linux Foundation, it is becoming the default integration standard for agentic tooling. Developers building agentic systems should understand it to ensure their systems remain interoperable across models and vendors.

How is agentic AI different from traditional automation? Traditional automation executes predefined, rigid workflows — if condition A, do action B. Agentic AI can handle ambiguity, adapt when steps fail, use judgment to select between approaches, and generalise to tasks that weren't explicitly programmed. The trade-off is that agentic systems are less predictable than traditional automation and require more careful governance.

Are agentic AI systems reliable enough for production? For well-scoped tasks with clear success criteria and appropriate guardrails, yes — leading engineering teams are running agentic systems in production today. For tasks requiring high reliability across edge cases, or where failures have severe consequences, agentic systems typically need human review steps integrated into the workflow. Reliability is improving rapidly but is not yet uniform across task types.

How should teams govern agentic AI systems? Build governance in from the start, not as an afterthought. Define explicit permission boundaries for what tools the agent can use. Implement approval checkpoints before high-stakes actions. Maintain detailed audit logs of every action taken. Use least-privilege access — agents should have access only to the tools and data their specific task requires. Plan for failure: what happens when an agent gets stuck, takes a wrong action, or encounters an unexpected system state?

Iria Fredrick Victor

Iria Fredrick Victor(aka Fredsazy) is a software developer, DevOps engineer, and entrepreneur. He writes about technology and business—drawing from his experience building systems, managing infrastructure, and shipping products. His work is guided by one question: "What actually works?" Instead of recycling news, Fredsazy tests tools, analyzes research, runs experiments, and shares the results—including the failures. His readers get actionable frameworks backed by real engineering experience, not theory.

Share this article:

Related posts

More from AI

May 4, 2026

1780% of AI projects fail. Not because the technology is broken — because companies keep making the same predictable mistakes. Here's what they are and how to avoid them.

May 2, 2026

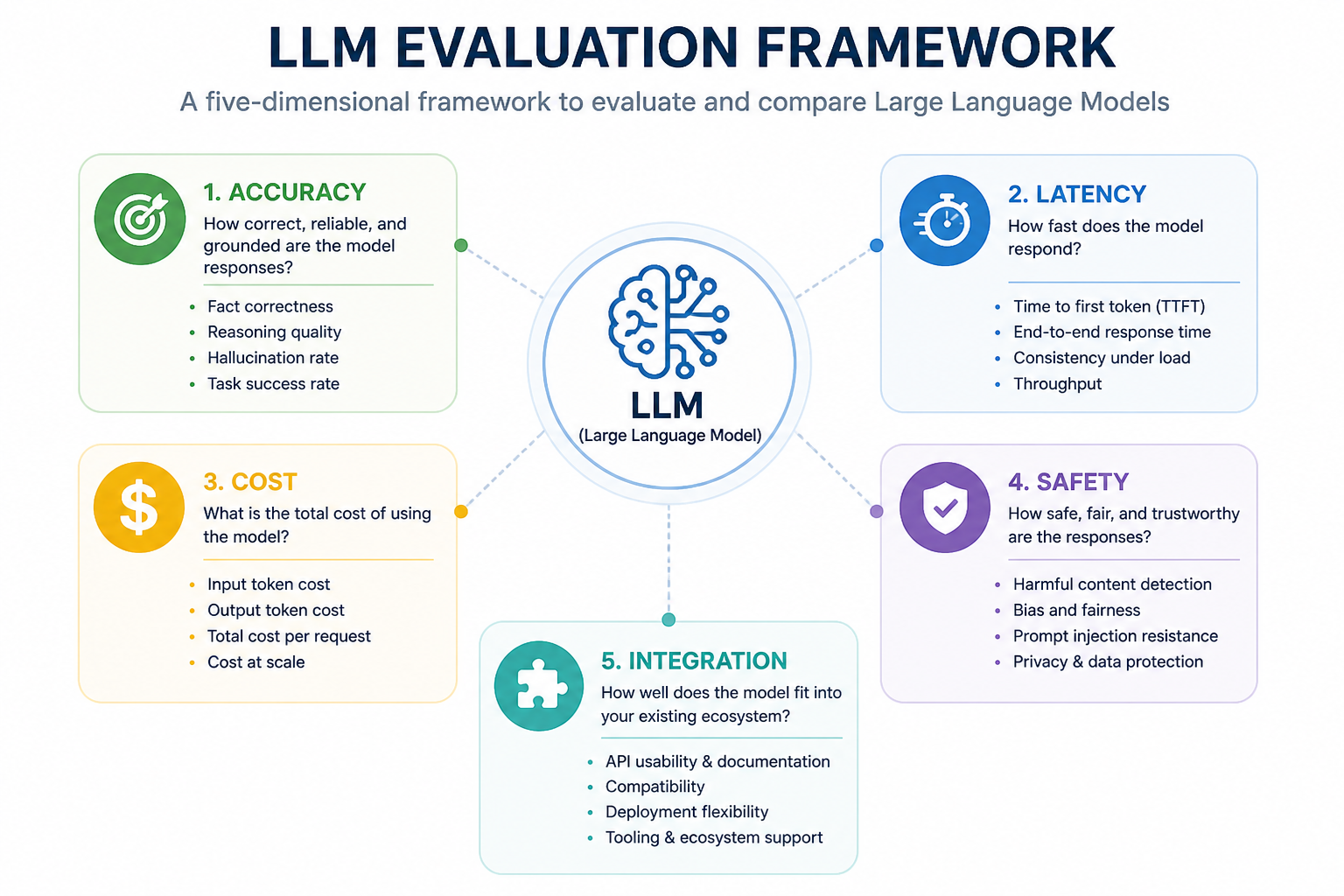

45Not all LLMs are equal in production. Learn how to evaluate large language models for accuracy, speed, cost, safety, and fit — before you commit to one.

April 29, 2026

32AI doesn't sound like you because you haven't taught it to. Here's the real reason — and how to fix it.