DevOps-Gym: The First Benchmark That Proves AI Agents Fail at Real Infrastructure Tasks

AI passes coding interviews but fails at real DevOps. Fredsazy breaks down DevOps-Gym — the first benchmark that proves the gap — and what it means for your infrastructure.

Your AI can write a sorting algorithm. It can explain a design pattern. It might even pass a coding interview. But give it a broken Kubernetes pod and ask it to figure out why logs aren't showing up? It freezes. DevOps-Gym is a new benchmark that tests AI agents on real infrastructure tasks — not toy problems. The results are humbling. Here's what the benchmark reveals about where AI actually stands.

Let me tell you why I stopped trusting AI with my infrastructure.

I was watching someone test an AI agent on a simple task.

A container wasn't starting. The error log said "port already in use." Any junior DevOps engineer would check what was running on that port, kill the process, or change the port mapping. Basic stuff.

The AI agent? It suggested restarting the whole server.

Not fixing the port conflict. Not checking for the existing process. Just... reboot everything.
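For contrast, here is roughly what that first check looks like in code. This is a minimal sketch, assuming the conflict is on a local TCP port; the function name `port_in_use` is my own, not something from the agent or the benchmark.

```python
import socket


def port_in_use(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if something is already accepting connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
        probe.settimeout(0.5)
        # connect_ex returns 0 when the connection succeeds, which means
        # another process is already listening on that port.
        return probe.connect_ex((host, port)) == 0
```

If `port_in_use(8080)` comes back true, the next step is finding the owner (for example with `lsof -i :8080` or `ss -ltnp`) and then stopping that process or changing the port mapping. No reboot required.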

That's when I realized: AI agents look smart on coding problems. But real infrastructure work is different. Messier. Less predictable. Full of context that isn't in the training data.

Then I found out about DevOps-Gym — a benchmark designed specifically to test AI agents on real infrastructure tasks. And the results confirm what I'd been seeing.

AI agents fail. A lot.

What Is DevOps-Gym? (Explained Simply)

DevOps-Gym is a benchmark. Think of it like a standardized test for AI agents, but instead of coding problems, it uses real infrastructure scenarios.

The tasks include things like:

- Debugging a failed deployment

- Figuring out why logs stopped appearing

- Fixing a misconfigured load balancer

- Resolving a container that won't start

- Tracing a network issue between services

These aren't toy problems. They're based on real failure modes that happen in actual infrastructure.

The AI agent gets access to the same tools a human would: logs, command line, configuration files. It has to explore, diagnose, and propose a fix.

And here's what the benchmark found: state-of-the-art AI agents complete fewer than 30% of these tasks successfully.

Thirty percent. On infrastructure work that a mid-level DevOps engineer would solve in minutes.

Why AI Fails at Infrastructure (The Real Reasons)

Let me break down what the benchmark revealed.

Reason 1: Infrastructure requires exploration, not just pattern matching

Coding problems are self-contained. The AI sees the code. It sees the error. It suggests a fix.

Infrastructure problems require exploration. The AI has to check logs, run commands, trace connections, look at configurations. It has to discover what's wrong, not just recognize a pattern.

AI agents are terrible at this. They guess early. They fixate on one hypothesis. They don't systematically explore.

Reason 2: The failure could be anywhere

In a coding problem, the bug is in the code. You know where to look.

In infrastructure, the problem could be the container, the network, the load balancer, the DNS, the storage volume, the permissions, the secrets manager, or any combination of these.

AI agents don't handle combinatorial complexity well. They pick one culprit and blame everything on it.

Reason 3: Tools are messy

Real infrastructure tools have weird output formats. Inconsistent error messages. Missing logs. Partial information.

AI agents are trained on clean, well-documented examples. Real infrastructure is not clean. The benchmark showed that agents fail when the tool output doesn't match their training distribution.

Reason 4: There's no single "right answer"

In coding, the fix either works or it doesn't.

In infrastructure, there are multiple ways to solve a problem. Some are better than others. Some have hidden trade-offs. Some fix the symptom but not the cause.

AI agents pick the first solution that seems plausible. They don't evaluate trade-offs. They don't think about long-term impact.

What the Numbers Actually Say

I looked at the DevOps-Gym results closely. Here's what stood out.

Simple tasks (single container, clear error messages): AI agents succeeded about 55% of the time. Not great, but not terrible.

Medium tasks (multiple services, network involved): Success dropped to around 25%. The agents got lost. They couldn't trace the failure across services.

Complex tasks (distributed systems, intermittent failures): Success below 10%. Most agents never even found the root cause.

For comparison, human DevOps engineers with 1-2 years of experience typically succeed at 80-90% of these same tasks.

That's the gap. It's not small. It's not closing quickly. It's a fundamental limitation in how current AI agents approach infrastructure problems.

A Real Example From the Benchmark

Let me walk you through one of the DevOps-Gym tasks so you feel the difference.

The scenario: A web application is returning 500 errors intermittently. Maybe one in twenty requests fails.

Human approach: Check the logs first. See the error. Notice it only happens on certain endpoints. Trace the request path. Find that one service times out occasionally. Check its resource limits. Realize it's hitting memory limits. Increase the limit. Done.

AI approach (typical): Check the logs. See the error. Guess it's a database problem. Restart the database. Errors continue. Guess it's a network problem. Check network config. No obvious issue. Guess it's the load balancer. Change load balancer settings. Errors continue. Get stuck. Suggest rebooting the whole server.

The AI doesn't systematically eliminate possibilities. It jumps between hypotheses randomly. It doesn't remember what it already tried. It doesn't narrow down the search space.

That's why it fails.
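The discipline the human applies can be sketched in a few lines: test each hypothesis once, record what has been ruled out, and stop at the first one the evidence confirms. This is my own illustration, not code from DevOps-Gym. The check functions are hard-coded stand-ins; real ones would query logs, metrics, or resource limits.

```python
from typing import Callable, List, Optional, Tuple

Check = Tuple[str, Callable[[], bool]]


def diagnose(checks: List[Check]) -> Tuple[Optional[str], List[str]]:
    """Test hypotheses in order; return the confirmed one and those ruled out."""
    ruled_out: List[str] = []
    for hypothesis, check in checks:
        if hypothesis in ruled_out:
            continue  # never re-test something already eliminated
        if check():
            return hypothesis, ruled_out
        ruled_out.append(hypothesis)
    return None, ruled_out


# Stand-in checks for the intermittent-500 scenario (results are illustrative).
checks: List[Check] = [
    ("database", lambda: False),
    ("network", lambda: False),
    ("load_balancer", lambda: False),
    ("memory_limit", lambda: True),
]
culprit, ruled_out = diagnose(checks)
print(culprit, ruled_out)
```

The point is the `ruled_out` list. Each check shrinks the search space, and nothing gets restarted or reconfigured until a hypothesis is actually confirmed.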

What This Means for Your Team

Here's the practical takeaway.

Don't trust AI agents with unsupervised infrastructure work.

Not yet. Maybe not for a while. The benchmark is clear: current agents fail too often. Left unsupervised, they will break things.

Do use AI as a copilot for infrastructure.

Let the AI suggest commands. Let it explain log entries. Let it propose hypotheses. But a human stays in the loop, validating each step.

This is where AI actually helps. It can speed up the boring parts — grepping logs, formatting outputs, looking up documentation. But the human drives.
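One way to keep the human driving is an approval gate: the AI proposes a command, and nothing runs until a person says yes. Here is a minimal sketch under that assumption. `run_if_approved` and the `approve` callback are hypothetical names, not part of any agent framework.

```python
import shlex
import subprocess
from typing import Callable, Optional


def run_if_approved(command: str, approve: Callable[[str], bool]) -> Optional[str]:
    """Run an AI-suggested command only if the reviewer approves it."""
    if not approve(command):
        return None  # rejected: nothing is executed
    # shlex.split plus a list argument avoids shell=True, so a suggestion
    # cannot chain extra commands with ';' or '&&'.
    result = subprocess.run(
        shlex.split(command), capture_output=True, text=True, check=False
    )
    return result.stdout
```

In an interactive session, `approve` could be as simple as `lambda c: input(f"Run {c!r}? [y/N] ").lower() == "y"`. The design choice is that rejection is the default path and execution is the exception.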

Do run your own small tests.

DevOps-Gym is public. You can run it yourself. Test whatever AI agent you're considering. See how it performs on your kinds of infrastructure problems.

The results might surprise you. They'll definitely inform your decisions.
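However you run the tasks, it helps to tally outcomes by difficulty tier so your numbers are comparable to the ones above. The sketch below is not DevOps-Gym's own harness or API. It assumes you record each attempt yourself as a (tier, solved) pair, and the tier names are placeholders.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def success_rates(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Return the fraction of solved tasks for each difficulty tier."""
    solved: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for tier, ok in results:
        total[tier] += 1
        solved[tier] += int(ok)
    return {tier: solved[tier] / total[tier] for tier in total}
```

Feed it something like `[("simple", True), ("medium", False), ...]` from your own runs and compare the per-tier rates before deciding how much supervision an agent needs.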

The Gap Between Benchmarks and Reality

Here's something the DevOps-Gym creators admit: even their benchmark is easier than real infrastructure.

Why? Because in the benchmark, the problems are contained. The environment is clean. There's no legacy cruft. No undocumented workarounds. No "we've always done it this way" hacks.

Real infrastructure is worse. Much worse.

So if AI agents fail at more than 70% of benchmark tasks, they'll fail at even more real-world tasks.

That's not pessimism. That's just where the technology is right now.

What Good Looks Like Right Now

I don't want to sound like AI is useless for DevOps. It's not.

Here's what actually works today:

Log analysis – AI is great at summarizing logs, finding patterns, and highlighting anomalies. Use it for triage, not diagnosis.

Documentation lookup – AI can quickly find relevant docs, commands, and examples. This saves real time.

Command suggestions – AI can suggest the next command to run based on previous output. Often helpful. Sometimes wrong. Verify before running.

Incident post-mortems – AI can help write summaries and timelines after the fact. Good for documentation, bad for real-time response.

Notice what's missing? Autonomous problem-solving. AI isn't ready for that. Not in infrastructure.
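To make the log-triage item concrete, here is the kind of summary worth automating: count server errors per endpoint so a human can see where to look first. This is a sketch under a big assumption, namely that each log line has the simplified form `METHOD PATH STATUS`. Real access logs will need their own parsing.

```python
from collections import Counter
from typing import Iterable


def errors_by_endpoint(lines: Iterable[str]) -> Counter:
    """Count 5xx responses per path in 'METHOD PATH STATUS' log lines."""
    counts: Counter = Counter()
    for line in lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip lines that do not match the assumed format
        _method, path, status = parts
        if status.startswith("5"):
            counts[path] += 1
    return counts
```

This is triage, not diagnosis. It tells you which endpoint is failing; working out why is still the human's job.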

The Brand Takeaway

Here's what I want people to think when they hear Fredsazy talk about AI and DevOps:

"They know the benchmarks. They know the gaps. They don't overpromise what AI can do."

Anyone can say "AI will replace DevOps." The people who get noticed — who get trusted with real infrastructure — are the ones who know where AI actually stands today.

DevOps-Gym proves it: AI agents fail at real infrastructure tasks. That's not a failure of AI. That's a reality check.

Use AI as a copilot. Keep humans in the loop. Test before trusting.

That's how you actually win.

One Last Thing

Go look at your most common infrastructure failure.

The one that happens once a week. The one your team knows how to fix in ten minutes.

Ask yourself: would I trust an AI agent to fix this alone?

If the answer is no — and it should be — that's not a problem with you. That's a problem with the technology.

DevOps-Gym just proved it.

Written by Fredsazy — because 30% success isn't ready for production.

Iria Fredrick Victor

Iria Fredrick Victor (aka Fredsazy) is a software developer, DevOps engineer, and entrepreneur. He writes about technology and business, drawing from his experience building systems, managing infrastructure, and shipping products. His work is guided by one question: "What actually works?" Instead of recycling news, Fredsazy tests tools, analyzes research, runs experiments, and shares the results, including the failures. His readers get actionable frameworks backed by real engineering experience, not theory.

Share this article:

Related posts

More from Devops

May 27, 2026

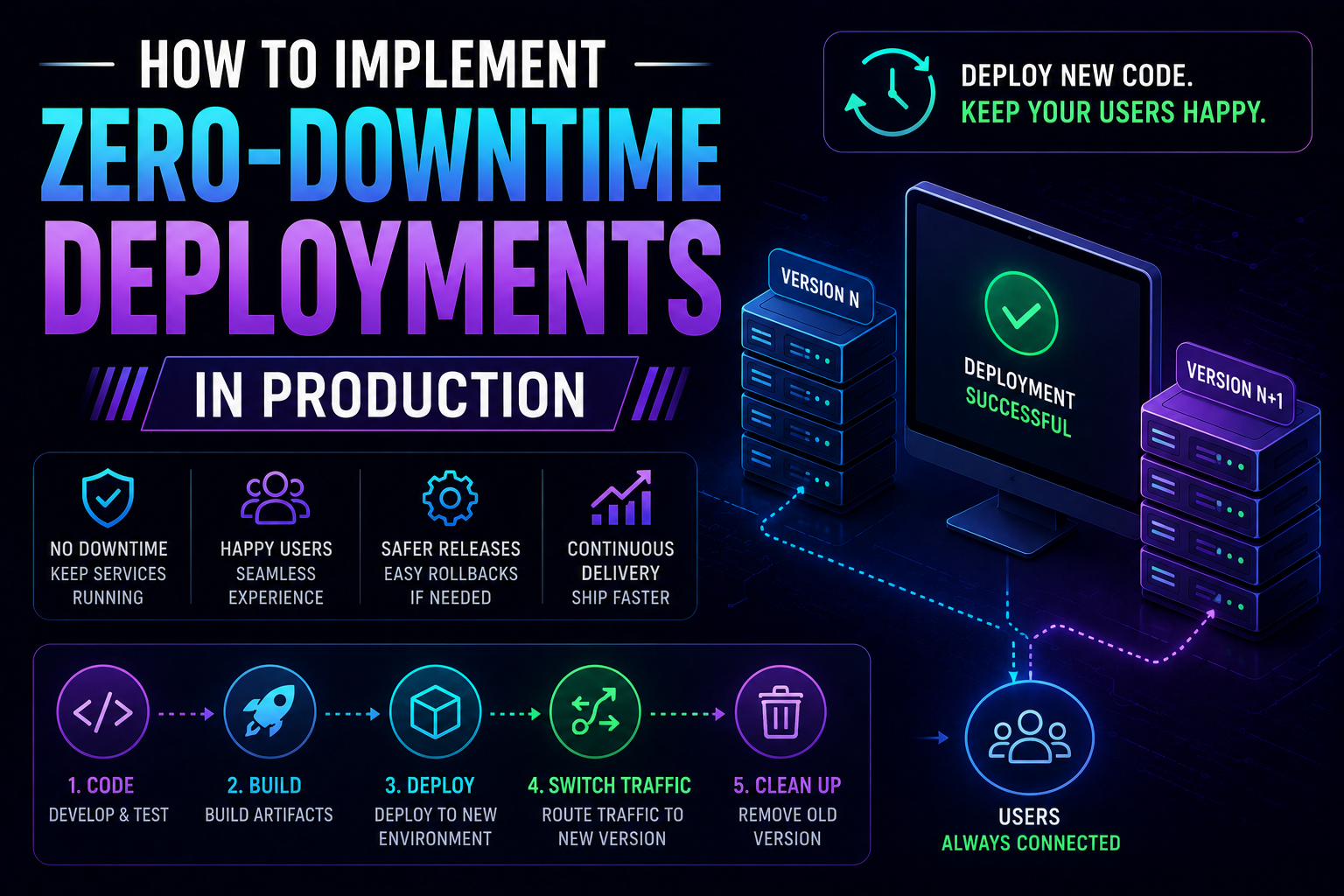

89Learn how to implement zero-downtime deployments in production — covering rolling updates, blue-green deployments, canary releases, feature flags, database migration patterns, graceful shutdown, and automated rollback with real Kubernetes YAML and code examples.

May 13, 2026

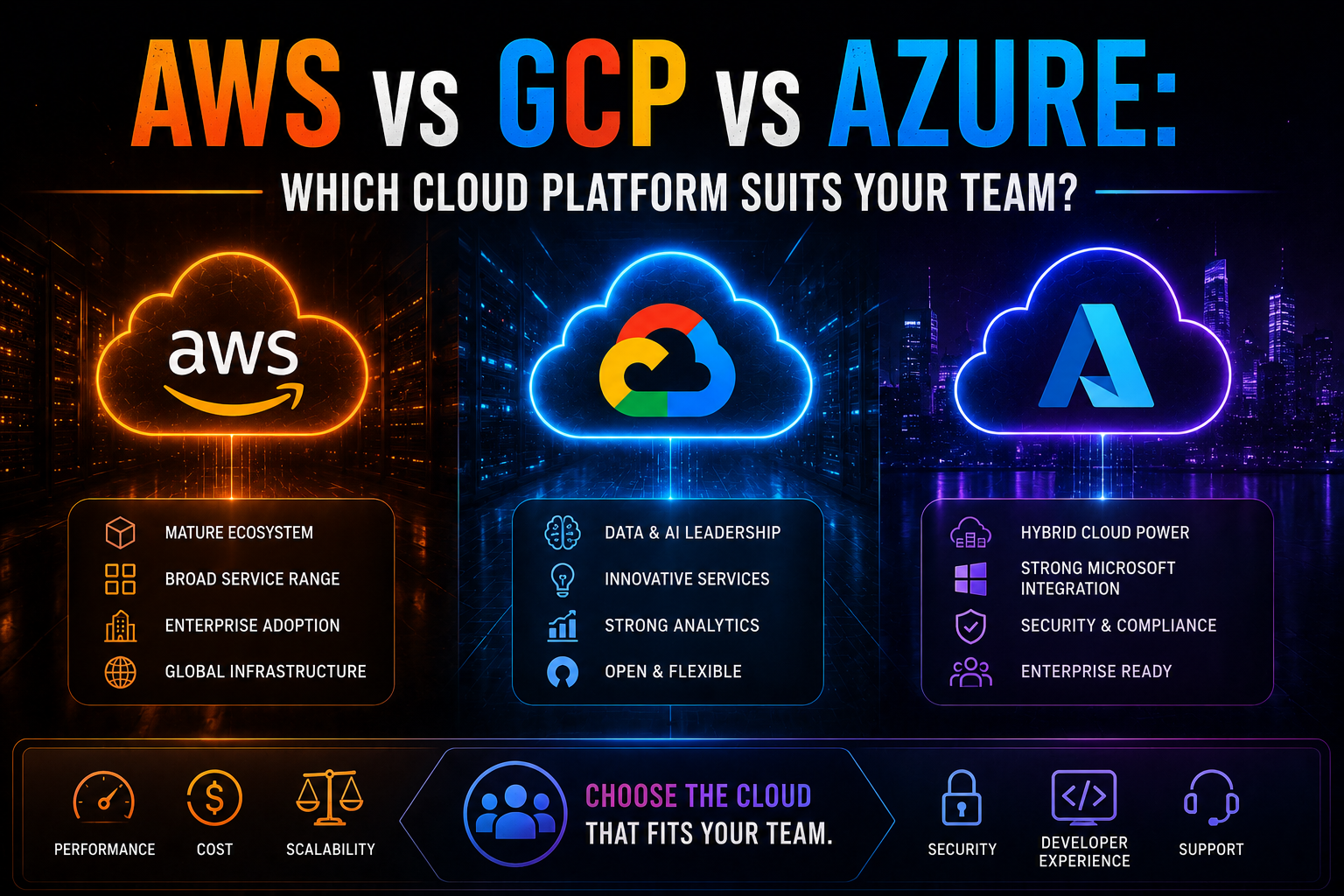

749AWS vs Azure vs GCP in 2026 — an honest, vendor-neutral comparison covering market share, AI/ML tooling, pricing, Kubernetes, compliance, and which cloud platform suits your team's specific workload and ecosystem.

May 13, 2026

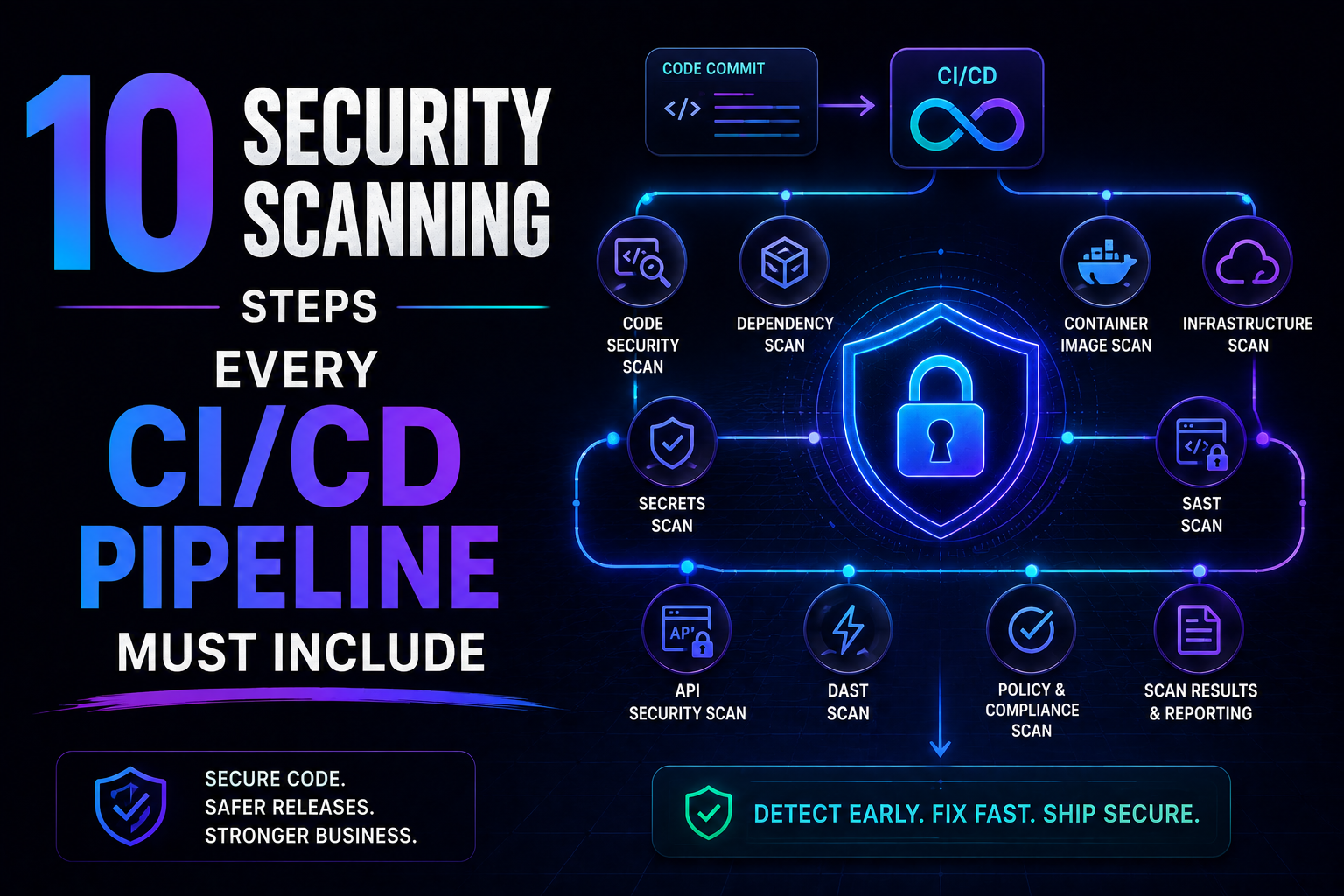

70SAST, SCA, container scanning, DAST, runtime verification – 10 security steps every CI/CD pipeline needs in 2026. Open source tools, real examples, and a rollout timeline. Stop shipping vulnerabilities.