AWS vs GCP vs Azure: which cloud platform suits your team?

AWS vs Azure vs GCP in 2026 — an honest, vendor-neutral comparison covering market share, AI/ML tooling, pricing, Kubernetes, compliance, and which cloud platform suits your team's specific workload and ecosystem.

Choosing a cloud provider is one of the most consequential infrastructure decisions a team makes. It shapes your hiring pool, your operational costs, your developer experience, and in some cases your product's performance ceiling. And unlike most technology decisions, it's genuinely difficult to reverse once you've built on top of it.

The cloud infrastructure market crossed $419 billion in 2025, and some forecasts put global cloud spending above $800 billion by the end of 2026. The three hyperscalers (AWS, Azure, and GCP) command a combined 68% of that market, and the gap between them and every other provider is growing. Some 89% of enterprises now use two or more cloud providers, but most workloads still live on a primary platform. Getting that primary choice right matters.

In 2026, the honest answer is that all three platforms are excellent. The services are broadly comparable, the global infrastructure is mature, the SLAs are nearly identical (99.95–99.99% uptime), and the pricing is within a similar range. The real differences — the ones that should actually drive your decision — are more specific: ecosystem fit, team expertise, AI/ML tooling, pricing model transparency, and which platform genuinely excels for your specific workload type.

This article cuts through the marketing fog. No affiliate links, no vendor bias. Just a straight comparison based on what each platform does well, where it falls short, and which team should choose which.

The current state of the cloud market in 2026

AWS holds approximately 31% of the global cloud market in 2026, followed by Azure at 25% and GCP at 12%. However, Azure is growing faster in enterprise adoption, and GCP leads in AI/ML services growth.

Key Q1 2026 developments: AWS launched Trainium3 instances (3x faster than Trainium2 for AI training), Azure integrated GPT-5 natively into all enterprise services, and GCP cut compute pricing by 8% across all regions.

These moves tell you something important about each platform's strategic direction in 2026: AWS is doubling down on AI compute infrastructure, Azure is deepening its OpenAI integration for enterprise customers, and GCP is competing aggressively on price while continuing to lead on Kubernetes and data analytics tooling.

AWS: the incumbent, the default, the everything store

Amazon Web Services launched in 2006, two years before Google's cloud and four years before Microsoft's. It has spent the two decades since building the broadest and deepest cloud service catalogue in the industry.

AWS holds ~31% market share and leads on service breadth — 200+ managed services, largest partner ecosystem, and the most community resources.

What AWS does best:

Service breadth and depth. If a cloud service exists, AWS probably has it. And if AWS has it, it's usually the most mature version — with the most configuration options, the most integrations, and the most documentation. This is AWS's deepest moat.

The talent pool. More engineers have AWS experience than Azure or GCP experience. If you're hiring, AWS certifications are the most common and the most widely understood. This is an underappreciated operational advantage: a team that already knows AWS will be productive from day one.

The partner ecosystem. The AWS Marketplace has over 12,000 products. Most third-party software, security tools, monitoring platforms, and infrastructure tools have native AWS integrations. You're rarely the first person to solve a problem on AWS.

Compute variety. From tiny Lambda functions to massive GPU clusters for AI training, AWS has the widest selection of compute options. The Trainium3 instances launched in early 2026, together with AWS's Inferentia inference chips, make AWS increasingly competitive on AI workload costs against NVIDIA-based alternatives.

Where AWS falls short:

Complexity. AWS's depth is also its curse. There are often five ways to do the same thing (EC2 vs ECS vs EKS vs Fargate vs Lambda), and choosing well requires experience. New teams frequently over-provision, under-optimise, or build on the wrong service for their use case.

Cost management. AWS billing is notoriously complex. The pricing model for some services — data transfer, cross-AZ traffic, NAT gateways — creates unexpected bills that punish teams who don't monitor costs obsessively from the start. AWS Cost Explorer helps, but the underlying pricing complexity doesn't go away.
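The Python sketch below estimates one month of NAT gateway and cross-AZ charges, to show how these hidden line items add up. The rates are assumptions based on historical US East list prices: about $0.045 per NAT gateway-hour, $0.045 per GB processed, and $0.01 per GB charged in each direction for cross-AZ traffic. The traffic volumes are hypothetical. Check current AWS pricing before relying on the numbers.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common billing convention: 8,760 hours / 12 months


@dataclass(frozen=True)
class NetworkRates:
    # Assumed US East list prices (USD); verify against current AWS pricing.
    nat_hourly: float = 0.045       # per NAT gateway-hour
    nat_per_gb: float = 0.045       # per GB processed by a NAT gateway
    cross_az_per_gb: float = 0.01   # per GB, charged in each direction


def monthly_network_cost(nat_gateways: int, nat_gb: float, cross_az_gb: float,
                         rates: NetworkRates = NetworkRates()) -> dict[str, float]:
    """Estimate one month of NAT gateway and cross-AZ charges in USD."""
    nat_fixed = nat_gateways * HOURS_PER_MONTH * rates.nat_hourly
    nat_processing = nat_gb * rates.nat_per_gb
    # Cross-AZ transfer is billed on both the sending and the receiving side.
    cross_az = cross_az_gb * rates.cross_az_per_gb * 2
    total = nat_fixed + nat_processing + cross_az
    return {
        "nat_fixed": round(nat_fixed, 2),
        "nat_processing": round(nat_processing, 2),
        "cross_az": round(cross_az, 2),
        "total": round(total, 2),
    }


# Hypothetical workload: one NAT gateway per AZ across three AZs,
# 5 TB/month through NAT and 20 TB/month between AZs.
print(monthly_network_cost(nat_gateways=3, nat_gb=5_000, cross_az_gb=20_000))
```

In this example the per-GB charges dwarf the fixed gateway fee. That is why teams commonly route S3 and DynamoDB traffic through gateway VPC endpoints, which avoid NAT processing charges. They also keep chatty services in the same AZ where availability requirements allow.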

UI and documentation quality. AWS's console is functional but not beautiful, and documentation quality is inconsistent across services. Newer services often have sparse documentation until the community catches up.

Pricing snapshot (US East, May 2026):

- EC2 t3.medium (2 vCPU, 4GB RAM): ~$0.0416/hour

- S3 standard storage: $0.023/GB/month

- Data transfer out: $0.09/GB (first 10TB/month)

- RDS MySQL db.t3.medium: ~$0.068/hour

AWS is the right choice for:

- Teams without a strong existing cloud preference who want maximum optionality

- Applications requiring specialised AWS services with no equivalent elsewhere

- Regulated industries where AWS's extensive compliance certifications (100+, comparable to Azure's) matter

- Teams that need access to the largest pool of experienced cloud engineers

- High-volume AI inference workloads using Trainium/Inferentia custom silicon

Azure: the enterprise platform, the Microsoft ecosystem play

Microsoft Azure launched in 2010 and has grown into the enterprise cloud of choice for organisations already invested in the Microsoft ecosystem. Its growth story in 2026 is inseparable from two things: its deep integration with Microsoft 365, Active Directory, and the broader enterprise software stack — and its exclusive partnership with OpenAI.

Azure is at ~23–25% and growing fastest in absolute terms, driven by Microsoft 365 integration, an exclusive OpenAI partnership, and the most compliance certifications of any provider.

What Azure does best:

Microsoft ecosystem integration. If your organisation runs Microsoft 365, uses Active Directory for identity, has existing Windows Server infrastructure, or runs SQL Server or .NET applications, Azure is the path of least resistance by a significant margin. Azure Active Directory (now Microsoft Entra ID), hybrid connectivity via Azure Arc, and seamless SSO across the Microsoft stack eliminate integration work that would be substantial on any other provider.

Enterprise AI with OpenAI. Azure's exclusive commercial partnership with OpenAI is a genuine differentiator in 2026. Azure OpenAI Service gives enterprise customers access to OpenAI models such as GPT-4o, GPT-4, and DALL-E. It adds enterprise-grade security controls, data residency guarantees, private networking, and compliance features that the public OpenAI API does not offer in the same form. Azure integrated GPT-5 natively into all enterprise services in Q1 2026. That makes it the default choice for enterprises that want to use frontier OpenAI models with data governance.

Compliance and governance. Azure has the most compliance certifications of any cloud provider — over 100 certifications covering GDPR, HIPAA, FedRAMP, ISO 27001, SOC 2, and dozens of industry and regional certifications. For regulated industries — healthcare, financial services, government — this reduces compliance burden substantially.

Hybrid cloud leadership. Azure Arc extends Azure management, policy, and security to on-premises infrastructure, other clouds, and edge environments. For enterprises with significant on-premises investment that can't migrate everything immediately, Azure's hybrid story is the most mature of the three providers.

Where Azure falls short:

Pricing complexity and cost predictability. Azure's enterprise-focused services and layered pricing can make it challenging to predict costs or move quickly. Azure Reserved Instances and licensing discounts can deliver substantial savings, but navigating the licensing model — particularly the interaction between Microsoft 365 licenses and Azure services — is genuinely complex.

Developer experience outside the Microsoft stack. Azure is excellent for .NET, C#, and Windows-native workloads. For teams running Python, Go, or Node.js on Linux, the experience is perfectly functional but historically less polished than AWS or GCP. This gap has narrowed in recent years but hasn't entirely closed.

Service naming and overlap. Azure's service naming is famously confusing. Azure Container Apps, Azure Container Instances, Azure Kubernetes Service, and Azure App Service all run containers, in different ways and for different use cases, and the boundaries between them are not always intuitive.

Pricing snapshot (East US, May 2026):

- D2s v3 (2 vCPU, 8GB RAM): ~$0.096/hour

- Azure Blob Storage: $0.018/GB/month

- Data transfer out: $0.087/GB (first 10TB/month)

- Azure SQL Database (General Purpose, 2 vCores): ~$0.362/hour

Azure is the right choice for:

- Organisations already running Microsoft 365, Active Directory, or significant .NET/Windows infrastructure

- Enterprises requiring frontier OpenAI models (GPT-4o, GPT-5) with enterprise data governance

- Highly regulated industries where compliance certification breadth matters

- Enterprises with hybrid cloud requirements bridging on-premises and cloud

- Teams using Microsoft's developer tooling (Visual Studio, GitHub Enterprise, DevOps)

GCP: the engineering-forward platform, the data and AI specialist

Google Cloud Platform launched in 2008 and has long been the underdog in the hyperscaler race. It is a technology-first platform with an extraordinary infrastructure foundation, best-in-class data tooling, and a Kubernetes experience widely regarded as the best available. That is unsurprising given that Google created Kubernetes out of its experience running containers at enormous scale internally.

GCP holds ~11–12% but is the fastest-growing by percentage, with best-in-class Kubernetes (GKE), BigQuery for data analytics, and Google's private global network backbone.

What GCP does best:

Kubernetes and container infrastructure. Google invented Kubernetes. Google Kubernetes Engine (GKE) is the most mature, most feature-complete managed Kubernetes service in the industry — with Autopilot mode that removes the need to manage node pools entirely, automatic node provisioning, and built-in cost optimisation. For teams running containerised workloads at scale, GKE is the standard against which EKS and AKS are measured.

Data analytics and BigQuery. BigQuery is GCP's crown jewel. It's a serverless, massively parallel analytics database that can scan terabytes in seconds and petabytes in minutes without any infrastructure provisioning. For data engineering, analytics engineering, and business intelligence workloads, BigQuery is among the fastest and most operationally simple large-scale analytics options available. dbt has a mature BigQuery adapter and is a common choice for data transformation in GCP data stacks.
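BigQuery's on-demand model bills by bytes scanned rather than by provisioned capacity, which is part of what makes it operationally simple. The sketch below estimates the cost of a single query under two assumptions you should verify for your region:

- an on-demand rate of $6.25 per TiB scanned;
- a 1 TiB monthly free allowance, passed in explicitly.

It ignores smaller details such as the per-table minimum charge. In practice, the bytes figure can come from a dry run with the `google-cloud-bigquery` client before you execute anything: set `QueryJobConfig(dry_run=True)`, then read the job's `total_bytes_processed`.

```python
TIB = 2**40  # on-demand BigQuery pricing is quoted per tebibyte (TiB)


def on_demand_query_cost(bytes_scanned: int,
                         price_per_tib: float = 6.25,
                         free_tib_remaining: float = 0.0) -> float:
    """Estimate the on-demand cost (USD) of one query.

    Assumptions: price_per_tib defaults to $6.25, and any remaining monthly
    free allowance is passed in explicitly; check current regional pricing.
    """
    scanned_tib = bytes_scanned / TIB
    billable_tib = max(0.0, scanned_tib - free_tib_remaining)
    return round(billable_tib * price_per_tib, 2)


# A query that scans 50 TiB, with and without a full 1 TiB free allowance left.
print(on_demand_query_cost(50 * TIB))                          # 312.5
print(on_demand_query_cost(50 * TIB, free_tib_remaining=1.0))  # 306.25
```

Cost scales with bytes scanned, so the main levers for keeping BigQuery bills down are partitioning tables and selecting only the columns you need.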

Google's private network backbone. A key GCP differentiator is Google's private global fiber network, which includes its own subsea cables. On the default Premium network tier, traffic rides this backbone for most of its journey instead of the public internet. For globally distributed applications, this translates to more consistent performance and lower latency variance between regions, measurable in benchmarks and noticeable in production.

AI/ML infrastructure. Google's Tensor Processing Units (TPUs) remain among the most cost-effective hardware for training large language models and running large-scale ML inference, particularly for workloads big enough to keep many TPU chips busy. Vertex AI is GCP's unified ML platform, covering everything from data preparation through training, deployment, and monitoring. For teams training custom models, GCP's AI infrastructure is the most technically sophisticated option.

Pricing. GCP is typically 5–10% cheaper for compute. It also applies sustained use discounts automatically. AWS Reserved Instances and Azure Reserved VM Instances require a one- or three-year commitment, whereas GCP discounts eligible machine types once a resource runs for more than 25% of a month. GCP cut compute pricing by 8% across all regions in Q1 2026, extending its price competitiveness further.
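Sustained use discounts are easiest to understand as a tiered rate across the month. This Python sketch assumes the N1-style schedule, in which each successive quarter of the month is billed at 100%, 80%, 60%, and 40% of the base rate. Other machine series use smaller increments, and some (such as E2) receive no sustained use discounts at all. The base hourly rate in the example is hypothetical.

```python
# Assumed N1-style tiers: (fraction of the month, multiplier on the base rate).
# Other machine series use different tiers; some get no sustained use discount.
N1_TIERS = [(0.25, 1.0), (0.25, 0.8), (0.25, 0.6), (0.25, 0.4)]


def sustained_use_cost(base_hourly: float, usage_fraction: float,
                       hours_in_month: float = 730.0,
                       tiers: list[tuple[float, float]] = N1_TIERS) -> float:
    """Monthly cost (USD) of one VM that runs for `usage_fraction` of the month."""
    if not 0.0 <= usage_fraction <= 1.0:
        raise ValueError("usage_fraction must be between 0 and 1")
    remaining = usage_fraction
    cost = 0.0
    for tier_size, multiplier in tiers:
        used = min(remaining, tier_size)  # portion of the month billed in this tier
        cost += used * hours_in_month * base_hourly * multiplier
        remaining -= used
        if remaining <= 0:
            break
    return cost


def effective_discount(usage_fraction: float,
                       tiers: list[tuple[float, float]] = N1_TIERS) -> float:
    """Discount relative to paying the base rate for the same hours."""
    if usage_fraction == 0:
        return 0.0
    # Use a base rate of 1.0 and a one-hour "month" so costs are fractions.
    return 1 - sustained_use_cost(1.0, usage_fraction, 1.0, tiers) / usage_fraction


for fraction in (0.25, 0.5, 0.75, 1.0):
    print(f"{fraction:.0%} of month -> {effective_discount(fraction):.0%} discount")

# Hypothetical base rate of $0.10/hour, running all month.
print(round(sustained_use_cost(0.10, 1.0), 2))
```

Under these tiers, the effective discount depends on how much of the month the VM runs:

- a quarter of the month: no discount;
- half the month: 10% off;
- the full month: 30% off.

None of these discounts requires a commitment.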

Where GCP falls short:

Market share and ecosystem. At 12% market share, GCP has fewer third-party integrations, fewer community resources, and a smaller pool of engineers with deep GCP experience than AWS or Azure. This is less of a concern for technical teams but matters for hiring and vendor support.

Enterprise sales and support. Google's historical strength is engineering, not enterprise sales. AWS and Azure have larger field sales organisations, more pre-sales technical resources, and more mature enterprise support structures. GCP has improved significantly in recent years — particularly after winning several marquee enterprise contracts — but the gap in enterprise support maturity still exists.

Service stability history. Google has a historical reputation, partly fair and partly exaggerated, for shutting down products without long notice periods. Consumer products like Stadia and cloud services like Google Cloud IoT Core were retired in ways that left customers scrambling. GCP has published clearer deprecation policies since 2023, but the perception lingers.

Pricing snapshot (US Central, May 2026):

- N2 n2-standard-2 (2 vCPU, 8GB RAM): ~$0.097/hour

- Cloud Storage standard: $0.020/GB/month

- Data transfer out: $0.08/GB (first 1TB free/month)

- Cloud SQL MySQL (db-n1-standard-2): ~$0.122/hour

GCP is the right choice for:

- Data-heavy workloads — analytics, data warehousing, ML pipelines — where BigQuery is the centre of the stack

- Teams running containerised workloads at scale who want the best Kubernetes experience

- AI/ML teams training custom large-scale models who need TPU access

- Startups that value GCP's aggressive pricing and startup credits

- Global consumer applications where network latency consistency matters

- Engineering-forward teams who value technical excellence over ecosystem breadth

Head-to-head comparison table

| Dimension | AWS | Azure | GCP |
|---|---|---|---|
| Market share (2026) | ~31% | ~25% | ~12% |
| Market share trend | Stable | Fastest growth in absolute terms | Fastest growth in percentage terms |
| Service breadth | 200+ services | 200+ services | 150+ services |
| Best-in-class strength | Breadth, ecosystem, compute variety | Microsoft integration, OpenAI, compliance | Kubernetes, BigQuery, networking, pricing |
| AI/ML speciality | Broadest GPU selection, SageMaker | OpenAI/GPT-5 integration, Azure AI | TPUs, Vertex AI, custom model training |
| Kubernetes | EKS (solid) | AKS (solid) | GKE (best in class) |
| Managed database | RDS, Aurora, DynamoDB | Azure SQL, Cosmos DB | Cloud SQL, Spanner, Bigtable |
| Serverless | Lambda (most mature) | Azure Functions | Cloud Functions, Cloud Run |
| Compute pricing | Baseline | ~5% higher | ~5–10% cheaper |
| Data egress cost | $0.09/GB | $0.087/GB | $0.08/GB |
| Compliance certs | 100+ | 100+ (most) | 60+ |
| Hybrid cloud | Outposts (good) | Arc (excellent) | Anthos, now GKE Enterprise (strong) |
| Free tier | 12 months + always free | 12 months + always free | $300 credit + always free |
| Talent availability | Highest | High | Lower |
| Documentation quality | Variable | Good | Generally excellent |
| Enterprise support | Excellent | Excellent | Good (improving) |

AI and ML in 2026: the dimension that changed everything

The most significant shift in the cloud comparison in 2026 is the AI/ML layer. All three providers have made aggressive bets, and the differences are material:

AI/ML is the biggest differentiator in 2026: Azure for OpenAI/GPT, GCP for TPUs and BigQuery ML, AWS for the broadest GPU selection and SageMaker ecosystem.

AWS for AI: AWS offers three things:

- the broadest accelerator selection: NVIDIA H100 and H200 GPUs alongside AWS's own Trainium3 and Inferentia2 chips;
- the widest variety of foundation models through Amazon Bedrock (Anthropic Claude, Meta Llama, Cohere, Mistral, and more);
- SageMaker, the most feature-complete MLOps platform.

AWS is the most flexible AI platform: you can bring any model and run it on any hardware configuration. The trade-off: more choices mean more decisions.

Azure for AI: The exclusive OpenAI partnership remains Azure's defining AI advantage. Enterprise customers who want to run GPT-4o or GPT-5 with private networking, data residency controls, and enterprise security cannot get that from any other cloud provider. Azure AI Studio provides a unified development environment for building, fine-tuning, and deploying AI applications. For teams building products on top of OpenAI models, Azure is the only enterprise-grade path among the three hyperscalers.

GCP for AI: Google's TPU v6 is among the most cost-effective hardware options for training transformer-based models at scale. Vertex AI is the most tightly integrated end-to-end ML platform, covering data preparation, training, fine-tuning, deployment, and monitoring in one unified experience. Gemini models are natively integrated throughout the GCP stack. For organisations training proprietary large models, GCP's AI infrastructure is the most technically sophisticated.

Pricing: what the numbers actually mean

The on-demand compute pricing across the three providers is remarkably similar. The differences that matter in practice are not in the per-hour compute rates — they're in:

Data egress costs. All three providers charge for data leaving their network. GCP is cheapest ($0.08/GB), AWS is most expensive ($0.09/GB) at standard tiers. At high data volumes, this difference is meaningful. At typical application scale, it rarely drives the decision.
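The sketch below shows what the egress rates quoted above mean per month. It treats the article's first-tier rates as flat per-GB prices, which is a simplification: real egress pricing is tiered by volume and includes free allowances. It also treats 1 TB as 1,000 GB for convenience.

```python
# First-tier internet egress rates quoted in this article (USD per GB).
# Simplification: real pricing is tiered by monthly volume and includes
# free allowances, so treat these as rough, flat approximations.
EGRESS_PER_GB = {"AWS": 0.09, "Azure": 0.087, "GCP": 0.08}


def monthly_egress_cost(tb_per_month: float) -> dict[str, float]:
    """Monthly internet egress cost per provider, treating 1 TB as 1,000 GB."""
    gb = tb_per_month * 1_000
    return {provider: round(gb * rate, 2) for provider, rate in EGRESS_PER_GB.items()}


for tb in (1, 10, 100):
    costs = monthly_egress_cost(tb)
    spread = costs["AWS"] - costs["GCP"]
    print(f"{tb:>3} TB/month: {costs} (AWS minus GCP: ${spread:,.2f})")
```

At 1 TB a month, the gap between AWS and GCP is about $10. At 100 TB a month it is about $1,000, which is roughly the volume at which egress starts to matter in the decision.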

Reserved/committed use pricing. All three offer significant discounts for committed usage: AWS Reserved Instances (1 or 3 year), Azure Reserved VM Instances, and GCP Committed Use Discounts. GCP's sustained use discounts apply automatically without commitment — a genuine operational simplification.
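A commitment bills every hour at a discounted rate whether or not the resource runs, while on-demand bills only the hours used. That gives a simple break-even rule: a commitment with discount d pays off only if the resource runs more than (1 - d) of the month. The Python sketch below applies this rule. The 35% discount is a hypothetical figure, not a quoted rate. The on-demand price is the t3.medium figure from this article's AWS snapshot.

```python
HOURS_PER_MONTH = 730


def break_even_utilisation(commit_discount: float) -> float:
    """Fraction of the month a resource must run for a commitment to win."""
    if not 0.0 <= commit_discount < 1.0:
        raise ValueError("commit_discount must be in [0, 1)")
    return 1.0 - commit_discount


def compare(on_demand_hourly: float, commit_discount: float,
            utilisation: float) -> tuple[str, float, float]:
    """Return (cheaper option, on-demand cost, committed cost) for one month."""
    # On-demand: pay only for the hours the resource actually runs.
    on_demand = on_demand_hourly * HOURS_PER_MONTH * utilisation
    # Commitment: pay the discounted rate for every hour, used or not.
    committed = on_demand_hourly * (1 - commit_discount) * HOURS_PER_MONTH
    cheaper = "commitment" if committed < on_demand else "on-demand"
    return cheaper, round(on_demand, 2), round(committed, 2)


# t3.medium on-demand rate from this article's AWS snapshot; the 35%
# commitment discount is a hypothetical figure for illustration.
print(break_even_utilisation(0.35))
for u in (0.5, 0.9):
    print(u, compare(0.0416, 0.35, u))
```

With these assumptions, a t3.medium that runs half the month is cheaper on demand. One that runs 90% of the month is cheaper under the commitment. GCP's automatic sustained use discounts shift this calculation, because part of the discount arrives without any commitment.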

Hidden costs. This is where teams get hurt. Cross-AZ traffic, NAT gateway costs, data transfer between services, and support plan costs add up significantly. AWS has the most complex pricing surface and the most opportunities for unexpected bills. GCP's pricing is generally more transparent and predictable.

Startup programmes:

- AWS Activate: up to $100,000 in credits for qualified startups

- Azure for Startups: up to $150,000 in credits

- Google for Startups: up to $200,000 in credits plus TPU access

If you're pre-revenue or early-stage, the startup credit programmes can meaningfully extend your runway and defer the provider decision until you have more information about your actual workload patterns.

Multi-cloud: the real answer for 89% of enterprises

About 89% of enterprises use two or more cloud providers in 2026. Tools like Terraform and Pulumi help manage infrastructure across providers. One of the main challenges is data transfer costs between clouds.

Multi-cloud is not something to plan for from day one. The operational complexity of managing infrastructure across multiple providers — different IAM systems, different networking primitives, different billing models — is real and adds overhead that early-stage teams don't need.

The pattern that works: start on one primary provider for everything. As specific workloads reach scale or have specific requirements, evaluate whether a secondary provider is the right tool. Common examples:

- Primary on AWS, BigQuery on GCP: AWS for application workloads, BigQuery for analytics because no AWS equivalent matches it for cost and simplicity at petabyte scale

- Primary on Azure, S3 on AWS: Azure for applications and Microsoft integration, S3 for static asset storage where CloudFront (AWS's CDN) and S3's global availability are advantageous

- Primary on GCP, Azure OpenAI for AI features: GCP for infrastructure and data, Azure OpenAI for enterprise GPT-5 access with data governance

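Define your infrastructure as code with Terraform or Pulumi from day one. The goal is not to enable multi-cloud immediately but to preserve the option. The resource definitions themselves stay provider-specific. However, your workflow, state management, and team skills carry over, instead of being tied to provider-native tooling such as CloudFormation or ARM templates.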

Decision framework: which platform for which team

Choose AWS if:

- Your team has no strong existing cloud preference and wants maximum optionality

- You need the broadest catalogue of managed services, particularly for niche or specialised requirements

- Hiring is a priority and you want to recruit from the largest pool of certified cloud engineers

- You're in a regulated industry (financial services, healthcare, government) with extensive compliance requirements

- You need flexible AI inference at scale and want to choose from multiple foundation models via Amazon Bedrock

- You want the most mature, most documented ecosystem with the most third-party integrations

Choose Azure if:

- Your organisation already runs Microsoft 365, Active Directory, SQL Server, or significant Windows infrastructure

- You need enterprise access to OpenAI models (GPT-4o, GPT-5) with private networking and data residency guarantees

- You're in a regulated industry and Azure's compliance certification count matters for your audit requirements

- You have on-premises infrastructure that needs to integrate with cloud in a hybrid model

- Your development team works primarily in .NET, C#, or builds on Microsoft developer tooling

Choose GCP if:

- Your product is data-intensive and BigQuery is a natural fit for your analytics layer

- You're running containerised workloads at scale and want the best-in-class Kubernetes experience (GKE)

- You're training large custom models and need TPU access for cost-effective training at scale

- Your team values clean documentation, engineering excellence, and a more opinionated platform over maximum breadth

- You're a startup that wants to maximise startup credits and keep compute costs low

- Your application is globally distributed and consistent low latency across regions is a performance requirement

Choose none of the above first if:

You're very early stage and not yet sure what your workload looks like. In that case, start with the free tier on the provider your team knows best, build something, and let actual usage patterns inform your decision. The startup credit programmes on all three providers give you room to experiment without committing prematurely.

The honest summary

The cloud wars are increasingly a battle of ecosystems, not raw capabilities. All three platforms can run your application. All three will keep your data safe. All three will scale with you. The differences that actually drive the decision in 2026 are:

Team expertise — the platform your team already knows will outperform a technically superior platform they don't. Skills matter more than features.

Ecosystem integration — for Microsoft shops, Azure's integration density with existing tools is a genuine productivity advantage. For data-heavy teams, BigQuery's analytics capabilities on GCP are hard to replicate elsewhere.

AI strategy — if OpenAI models with enterprise governance are central to your product, Azure is the only path. If custom model training at scale is your requirement, GCP's TPU infrastructure is the most cost-effective. If you want maximum model choice and flexibility, AWS Bedrock is the right starting point.

Long-term cost at your specific scale — run realistic cost models for your actual workload. The 5–10% pricing differences in compute rarely drive decisions, but egress costs, reserved instance strategies, and hidden fees can.
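A realistic cost model does not need to be elaborate. This minimal Python sketch totals compute, managed database, storage, and egress for one provider. The rate card uses this article's AWS US East snapshot. The workload is hypothetical: four VMs, one database, 2 TB of storage, and 20 TB of monthly egress. Reserved pricing, support plans, and hidden network charges are left out for brevity.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730


@dataclass(frozen=True)
class RateCard:
    vm_hourly: float          # USD per VM-hour
    db_hourly: float          # USD per managed-database-hour
    storage_gb_month: float   # USD per GB-month of object storage
    egress_per_gb: float      # USD per GB of internet egress (first tier, flat)


@dataclass(frozen=True)
class Workload:
    vms: int
    db_instances: int
    storage_gb: float
    egress_gb: float
    utilisation: float = 1.0  # fraction of the month the VMs actually run


def monthly_estimate(w: Workload, r: RateCard) -> dict[str, float]:
    """Break a month's bill into line items plus a total (USD)."""
    items = {
        "compute": w.vms * r.vm_hourly * HOURS_PER_MONTH * w.utilisation,
        "database": w.db_instances * r.db_hourly * HOURS_PER_MONTH,
        "storage": w.storage_gb * r.storage_gb_month,
        "egress": w.egress_gb * r.egress_per_gb,
    }
    items["total"] = sum(items.values())
    return {name: round(value, 2) for name, value in items.items()}


# Rates from this article's AWS US East snapshot; the workload is hypothetical.
aws = RateCard(vm_hourly=0.0416, db_hourly=0.068,
               storage_gb_month=0.023, egress_per_gb=0.09)
app = Workload(vms=4, db_instances=1, storage_gb=2_000, egress_gb=20_000)
print(monthly_estimate(app, aws))
```

With these assumed volumes, egress comes to roughly fifteen times the compute line. This is the point made above: the per-hour VM rate is rarely what decides the total. You can swap in each provider's rate card to compare them, provided the instance types are genuinely equivalent.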

The worst decision is paralysis — waiting to choose until you have more information that will only come from actually building. Pick the platform that fits your team's existing skills and ecosystem, start small, and optimise based on what you learn.

Frequently asked questions

Which cloud provider is cheapest — AWS, Azure, or GCP? GCP is typically 5–10% cheaper for compute at equivalent specification, and cut pricing a further 8% across all regions in Q1 2026. However, compute pricing is rarely the largest cost driver at scale — data egress, managed service costs, and whether you're using reserved/committed pricing matter more. Run a realistic cost model for your actual workload rather than comparing on-demand compute rates in isolation.

Which cloud is best for AI and machine learning in 2026? It depends on your AI use case. Azure is the only provider offering enterprise access to OpenAI's GPT-5 with private networking and data governance controls. GCP leads for custom model training with TPU v6 hardware and the most integrated end-to-end ML platform in Vertex AI. AWS offers the broadest GPU selection and the widest choice of foundation models through Amazon Bedrock. Most teams with serious AI requirements end up with a primary provider and secondary AI-specific tooling from another.

Is multi-cloud worth the complexity? At enterprise scale, yes — 89% of enterprises use two or more cloud providers. At startup scale, usually no. The operational overhead of managing multiple IAM systems, networking configurations, and billing models adds friction that early-stage teams don't need. Start on one provider, use Terraform to abstract your infrastructure, and add secondary providers only when a specific workload has a clear requirement that your primary provider can't meet well.

Which cloud is growing fastest? Azure is growing fastest in absolute dollar terms, driven by Microsoft 365 enterprise customers and OpenAI integration. GCP is growing fastest as a percentage, with the fastest year-over-year revenue growth rate of the three providers. AWS remains the largest by revenue and market share but is growing more slowly than its competitors.

Can I switch cloud providers later? Yes, but it's expensive and time-consuming. Cloud providers are designed to keep you on their platform — managed services, proprietary APIs, and egress costs all create switching costs. The best strategy is to abstract your infrastructure with Terraform or Pulumi from day one and minimise your use of provider-specific services where cloud-agnostic alternatives exist (for example, using self-managed Kafka instead of a provider-specific managed queue). But don't over-engineer for portability before you know what you actually need.

Which cloud is best for startups? GCP's startup credit programme ($200,000) is the most generous, and GCP's pricing is most competitive at lower scale. AWS Activate and Azure for Startups also offer substantial credits. For most technical startups without a specific ecosystem requirement, the deciding factor should be team familiarity — the platform your founders and early engineers know well will be the most productive platform in the first 12–18 months.

What is the difference between EKS, AKS, and GKE? All three are managed Kubernetes services. GKE (Google Kubernetes Engine) is widely considered the most mature and feature-complete. It is built by the company that created Kubernetes and operates it at very large scale. EKS (AWS) and AKS (Azure) are both solid production options. GKE's Autopilot mode manages nodes automatically and charges only for the resources pods request, rather than for node capacity. The closest AWS analogue, EKS on Fargate, also bills per pod, but it is a more limited, separate launch type. Neither EKS nor AKS offers a fully equivalent mode.
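Node-based and pod-based billing are easiest to compare with numbers. This Python sketch compares a three-node Standard-mode cluster with Autopilot billing for the same pods. All rates are hypothetical, chosen only to illustrate the shape of the trade-off. Both modes also carry a per-cluster management fee, which is omitted here.

```python
HOURS_PER_MONTH = 730

# Hypothetical rates for illustration only; real GKE prices vary by region
# and machine type. The per-cluster management fee (both modes) is omitted.
NODE_HOURLY = 0.20             # USD per hour for a 4 vCPU / 16 GiB node
AUTOPILOT_VCPU_HOURLY = 0.045  # USD per requested vCPU-hour
AUTOPILOT_GIB_HOURLY = 0.005   # USD per requested GiB-hour of memory


def standard_mode_cost(nodes: int) -> float:
    """Node-based billing: pay for every node, however full it is."""
    return nodes * NODE_HOURLY * HOURS_PER_MONTH


def autopilot_cost(requested_vcpu: float, requested_gib: float) -> float:
    """Pod-based billing: pay only for the resources pods request."""
    return (requested_vcpu * AUTOPILOT_VCPU_HOURLY
            + requested_gib * AUTOPILOT_GIB_HOURLY) * HOURS_PER_MONTH


# Three 4 vCPU / 16 GiB nodes (12 vCPU / 48 GiB), lightly vs tightly packed.
for vcpu, gib in ((5, 20), (12, 48)):
    print(f"pods request {vcpu} vCPU / {gib} GiB: "
          f"Standard ${standard_mode_cost(3):.2f} vs "
          f"Autopilot ${autopilot_cost(vcpu, gib):.2f}")
```

With these made-up rates, Autopilot is cheaper when pods leave nodes mostly idle. Standard mode wins once pods pack the nodes tightly, because pod-based rates are higher per unit of resource. Real break-even points depend on current GKE pricing and on how well your workloads bin-pack.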

Iria Fredrick Victor

Iria Fredrick Victor (aka Fredsazy) is a software developer, DevOps engineer, and entrepreneur. He writes about technology and business, drawing from his experience building systems, managing infrastructure, and shipping products. His work is guided by one question: "What actually works?" Instead of recycling news, Fredsazy tests tools, analyzes research, runs experiments, and shares the results, including the failures. His readers get actionable frameworks backed by real engineering experience, not theory.

Share this article:

Related posts

More from Devops

May 13, 2026

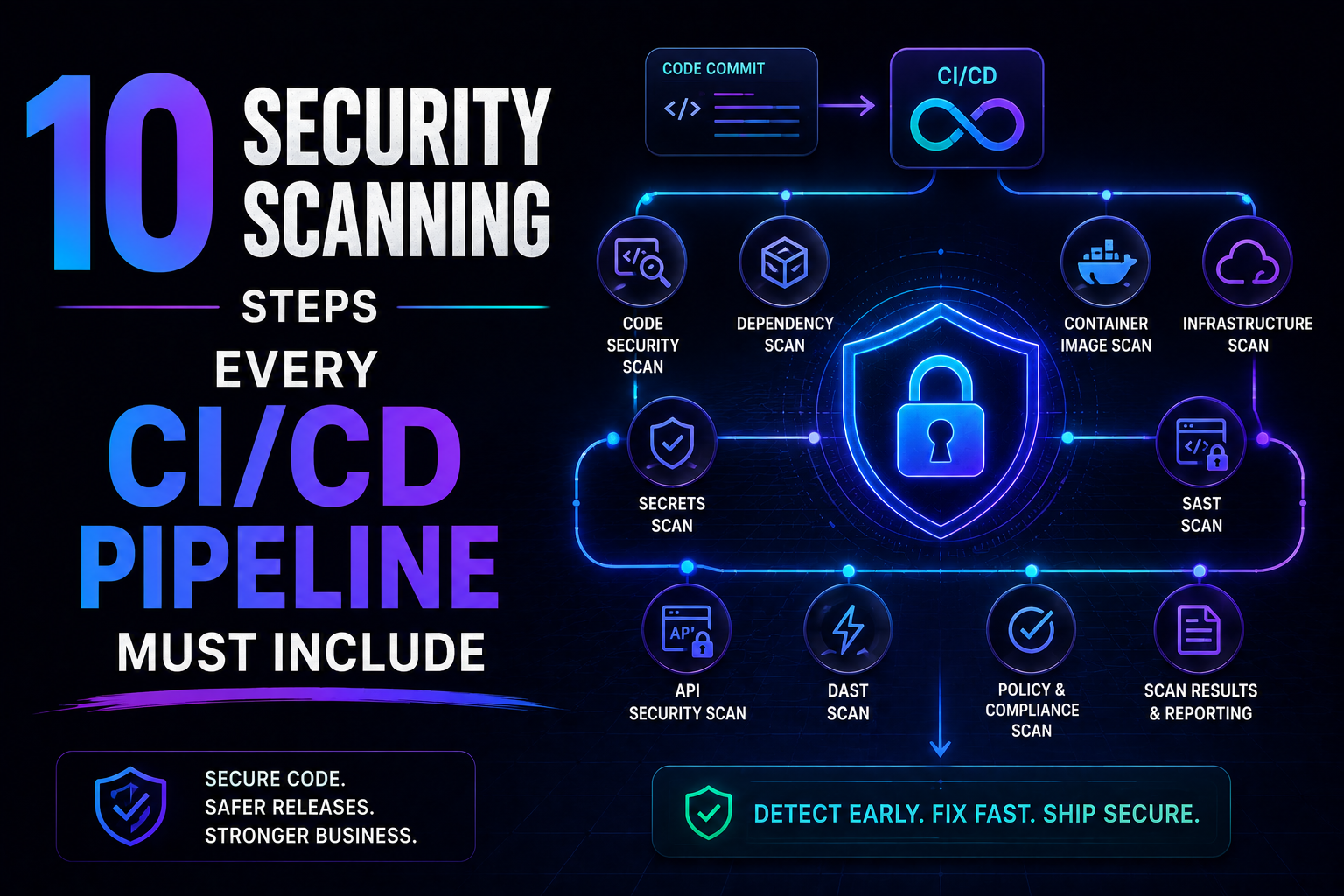

7SAST, SCA, container scanning, DAST, runtime verification – 10 security steps every CI/CD pipeline needs in 2026. Open source tools, real examples, and a rollout timeline. Stop shipping vulnerabilities.

May 5, 2026

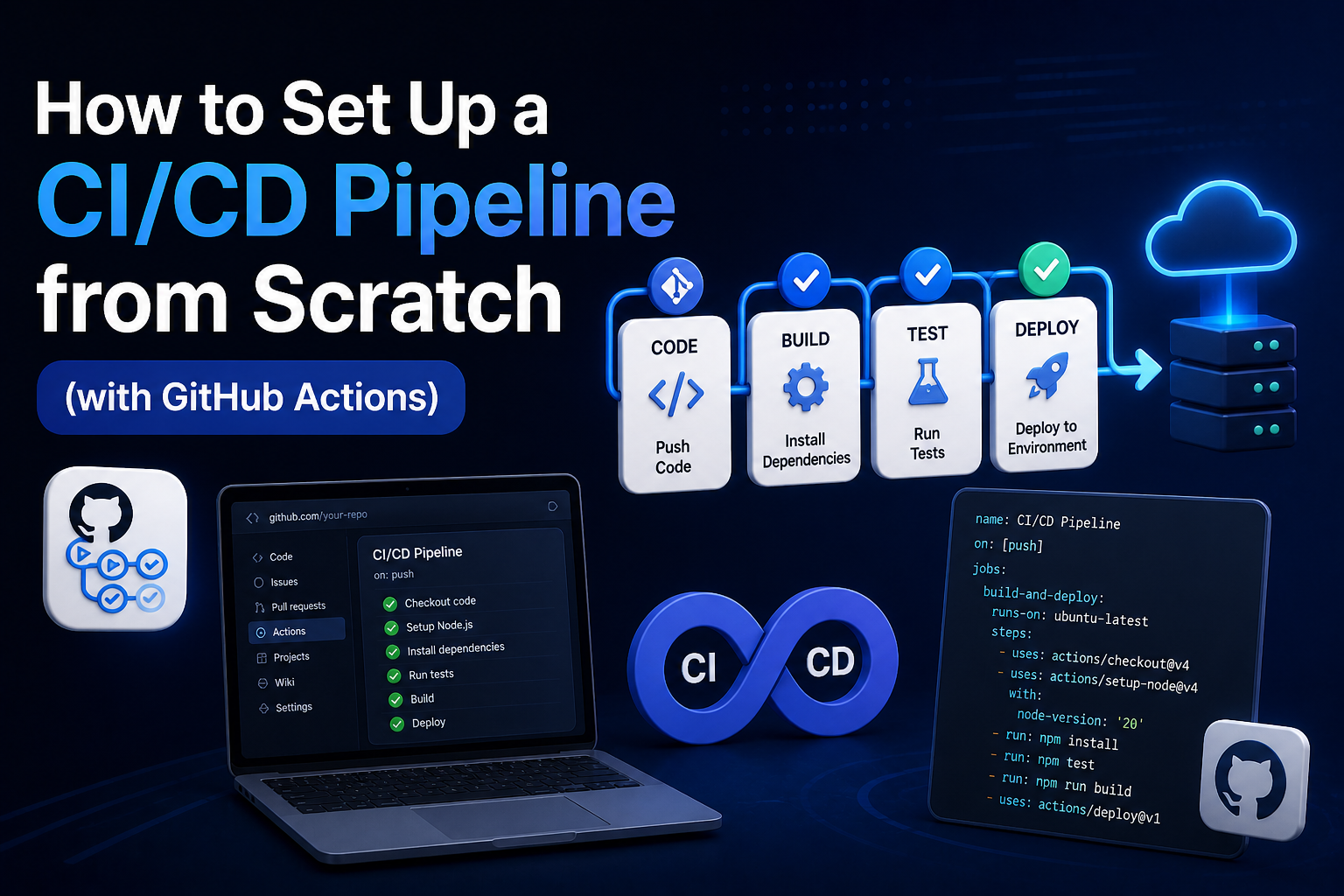

78Learn how to set up a production-ready CI/CD pipeline from scratch with GitHub Actions — full annotated YAML for linting, testing, Docker builds, security scanning, staging deployment, and production approval gates.

May 4, 2026

74Learn the 10 DevOps best practices that reduce deployment failures — covering CI/CD, IaC, canary releases, observability, rollbacks, and DORA metrics with real tool recommendations.