The 3,600x Efficiency Gap: Why AI Outperforms Humans at Security Testing (But Fails at Configuration)

AI can run 3,600 security tests an hour while a human gets through a dozen. Fredsazy explains why that's amazing, and why the same AI still can't configure your firewall correctly.

Everyone talks about AI replacing humans. But the reality is more interesting. In security testing, AI absolutely crushes human performance, running hundreds of tests in the time it takes a person to run one. But give that same AI a configuration file? It falls apart. Here's why the efficiency gap exists, where AI actually wins, and where humans are still irreplaceable.

Let me start with a number that stopped me cold.

3,600.

That's roughly how many security tests an AI agent can run in an hour, against about a dozen for a human.

I didn't pull this from a research paper. I got it from watching a friend run a comparison. Same security scope. Same targets. One human. One AI agent.

The human ran 12 tests in an hour. Thoughtful. Careful. Slower.

The AI ran 600 tests in ten minutes. Relentless. Exhaustive. Fast.

Multiply that out and the gap is massive: 600 tests in ten minutes is a pace of 3,600 an hour, against the human's 12. Not 2x. Not 10x. About 300 times the throughput.
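If you want to check the arithmetic yourself, here's a quick sketch in Python. It uses only the two figures from the comparison above, and the variable names are mine. On those figures, 3,600 is the AI's hourly pace and the throughput ratio works out to about 300.

```python
# Figures from the informal comparison described above (one human, one AI agent).
human_tests, human_minutes = 12, 60
ai_tests, ai_minutes = 600, 10

# Normalise both to tests per hour so they can be compared directly.
human_per_hour = human_tests * 60 / human_minutes
ai_per_hour = ai_tests * 60 / ai_minutes

# How many tests the AI runs for every one the human runs.
throughput_ratio = ai_per_hour / human_per_hour

print(f"human: {human_per_hour:.0f}/h, AI: {ai_per_hour:.0f}/h, ratio: {throughput_ratio:.0f}x")
# prints "human: 12/h, AI: 3600/h, ratio: 300x"
```

This is one informal run, not a benchmark, so treat the ratio as an order of magnitude.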

That's the promise of AI in security testing. And it's real.

But here's the twist I didn't expect: the same AI that runs 3,600 tests can't configure a basic firewall rule.

I've watched it fail. Over and over. The AI understands attacks. It doesn't understand intent. It doesn't understand your business. It doesn't understand why you need port 8080 open even though it looks suspicious.

This gap — between raw testing power and configuration judgment — is the most important thing to understand about AI in security right now.

The 3,600x Gap: Where AI Absolutely Wins

Let me be specific about what AI is genuinely great at.

Volume

A human gets bored. A human gets tired. A human starts cutting corners after the 50th test case.

An AI doesn't. It runs test 1 and test 1,000 with the same focus. Same speed. Same attention to detail.

For security testing, volume matters. The more test cases you run, the more likely you are to find the weird edge case that breaks everything.

Variation

A human tends to test what they know. Patterns they've seen before. Attacks that worked last time.

An AI doesn't have that bias. It will try things a human wouldn't think of. Weird encodings. Unusual combinations. Strange timing attacks.

I've seen AI find vulnerabilities that no human on the team had considered — not because the humans were bad, but because the AI simply tried more variations.

Persistence

A human who runs 600 tests and finds nothing usually stops and assumes the target is secure.

An AI doesn't assume. It keeps going. It changes tactics. It tries different angles.

That persistence finds things. Real things.

A Real Example I Watched

A friend was testing a small web app. Human first. Ran through the obvious attack patterns. SQL injection. XSS. CSRF. Found a couple of low-severity issues. Called it good.

Then they ran an AI agent against the same app.

The AI found a vulnerability in the password reset flow. It took 400 test cases to trigger. A human would never have run that many variations on a single endpoint. Too boring. Too time-consuming.

The AI didn't care. It just kept going.

That vulnerability? It would have let an attacker reset any user's password without email verification.

The human missed it. The AI found it. Not because the human was bad. Because the AI could run 400 variations without getting tired.

That's the 3,600x gap in action.
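To make "400 variations on a single endpoint" concrete, here's a minimal sketch of how an agent might enumerate variations and check each one. Everything in it is an assumption for illustration: `reset_password` is a made-up stand-in with a planted bug, not the app from the story, and the input lists are hypothetical. Nothing here touches a network.

```python
from itertools import product

# Hypothetical stand-in for the app under test, with a deliberately planted bug.
def reset_password(params: dict) -> bool:
    """Return True if the reset request is accepted."""
    token = params.get("token")
    if token == "valid-token":
        return True
    # Planted bug: an empty-list token sent as JSON skips verification.
    return token == [] and params.get("content_type") == "application/json"

# Hypothetical input dimensions; a real agent would generate far more.
TOKENS = ["valid-token", "", None, "null", [], "0", " valid-token", "VALID-TOKEN"]
CONTENT_TYPES = ["application/x-www-form-urlencoded", "application/json", "text/plain"]
EMAILS = ["victim@example.com", "VICTIM@example.com",
          "victim@example.com ", "victim+1@example.com"]

def variations():
    """Yield every combination of token, content type, and email."""
    for token, ctype, email in product(TOKENS, CONTENT_TYPES, EMAILS):
        yield {"token": token, "content_type": ctype, "email": email}

def find_bypasses():
    """Oracle: a reset accepted without the valid token counts as a finding."""
    return [v for v in variations()
            if v["token"] != "valid-token" and reset_password(v)]

total = sum(1 for _ in variations())
bypasses = find_bypasses()
print(f"{total} variations tried, {len(bypasses)} bypasses found")
# prints "96 variations tried, 4 bypasses found"
```

Three short lists already multiply out to 96 requests. That's the part a human finds boring and a machine doesn't.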

Where AI Falls Apart: Configuration

Now for the other side of the story.

Same AI. Same team. Different task.

They asked the AI to review a firewall configuration file. Just look at it. Find problems. Suggest fixes.

The AI flagged 47 issues.

Forty-seven.

Almost every line was a problem according to the AI. Too permissive here. Too restrictive there. Unusual port open. Logging missing. Rule order wrong.

Here's the thing: 44 of those 47 "issues" were intentional.

The team needed port 8080 open for a legacy app. The AI didn't know that. It just saw an unusual port and flagged it.

They needed a specific rule order for performance reasons. The AI didn't know that. It just saw a non-standard order and flagged it.

The AI was technically correct about security best practices. And completely wrong about what the business actually needed.

This is the configuration problem. AI doesn't understand context. Doesn't understand trade-offs. Doesn't understand that security is always a balance between protection and functionality.

For configuration, that's fatal.
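One way to picture the missing context is as a register of deliberate choices that only the humans hold. The sketch below is hypothetical throughout: the rule IDs, the `INTENT` register, and the `triage` function are names I made up for illustration, not anything the team in the story used.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Finding:
    rule_id: str
    issue: str

# Hypothetical register of intentional choices, written and owned by humans.
INTENT = {
    ("allow-8080", "unusual port open"): "legacy app still listens on 8080",
    ("order-fast-path", "non-standard rule order"): "hot rules first for performance",
}

def triage(findings):
    """Split AI findings into documented exceptions and items needing a human."""
    documented, needs_review = [], []
    for finding in findings:
        reason = INTENT.get((finding.rule_id, finding.issue))
        if reason is not None:
            documented.append((finding, reason))
        else:
            needs_review.append(finding)
    return documented, needs_review

findings = [
    Finding("allow-8080", "unusual port open"),
    Finding("order-fast-path", "non-standard rule order"),
    Finding("allow-any-ssh", "too permissive"),
]
documented, needs_review = triage(findings)
print(len(documented), "documented,", len(needs_review), "for human review")
# prints "2 documented, 1 for human review"
```

The AI can produce the findings. It can't produce the register, because the reasons in it never appear in the config file.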

Why Testing and Configuration Are Different

Let me explain the fundamental difference.

Security testing has a clear success metric: did you find the vulnerability? It's objective. The answer is either yes or no. The AI can run thousands of tests and know instantly whether each one succeeded.

Configuration has no clear success metric. Is this firewall rule correct? It depends. On your business. On your risk tolerance. On your legacy systems. On your team's expertise.

There's no objective answer. There's only "good enough for this specific situation."

AI is terrible at "it depends." Because "it depends" requires understanding intent, history, trade-offs, and organizational context. None of those live in the config file, so an AI reading it has none of them.

That's why the same AI that runs 3,600 tests can't configure a simple firewall. The tasks look similar — both are security — but they're fundamentally different kinds of problems.

The Real Pattern I've Noticed

After watching this play out across several teams, I've started to see a pattern.

AI excels at: repetitive, high-volume, well-defined tasks with clear success/failure outcomes.

AI fails at: judgment tasks that require context, trade-offs, and business understanding.

Testing is the first category. Configuration is the second.

This pattern holds beyond security too. AI is great at scanning, checking, validating. Things where "correct" is clearly defined.

AI is bad at deciding, balancing, interpreting. Things where "correct" depends on a hundred factors the AI doesn't know.

The teams that succeed don't try to make AI do both. They let AI run the tests. Then they let humans make the configuration decisions based on what the tests found.

That's the right split.

How to Use AI for Security Without Getting Burned

Here's what I've learned works.

Let AI test. Let humans configure.

Run the AI tests. Get the results. Then have a human look at those results and decide what to change. Don't let the AI change configuration directly. It will break things.

Use AI to find patterns, not make decisions.

AI is incredible at saying "I've seen this pattern before." Use that. Let it flag unusual ports, strange rule orders, weird dependencies. Then a human decides whether those flags matter.

Keep configuration human-first.

Configuration files are the map of how your business actually works. AI doesn't understand that map. Keep humans in charge of writing and reviewing it.

Test your AI's configuration suggestions before applying them.

If you do let AI suggest config changes, test them in a safe environment first. The AI will be confidently wrong. Catch that before it hits production.
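Here's a minimal sketch of what "test it first" could look like: write down the traffic your business needs, then check a suggested ruleset against that list before anything is applied. It assumes a toy first-match firewall model that matches on port only. The `Rule` type, `REQUIRED` table, and both rulesets are hypothetical, and real firewalls match on far more than a port.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Rule:
    action: str           # "allow" or "deny"
    port: Optional[int]   # None matches any port

def decide(rules, port, default="deny"):
    """First matching rule wins; otherwise fall back to the default policy."""
    for rule in rules:
        if rule.port is None or rule.port == port:
            return rule.action
    return default

# Hypothetical business requirements, written down by a human.
REQUIRED = {443: "allow", 8080: "allow", 23: "deny"}

def regressions(rules):
    """Return each required port whose outcome differs from what the business needs."""
    results = {port: decide(rules, port) for port in REQUIRED}
    return {port: got for port, got in results.items() if got != REQUIRED[port]}

current = [Rule("allow", 443), Rule("allow", 8080), Rule("deny", None)]
# Hypothetical AI suggestion that "hardens" the config by dropping the 8080 rule.
suggested = [Rule("allow", 443), Rule("deny", None)]

print(regressions(current))    # prints {}
print(regressions(suggested))  # prints {8080: 'deny'}
```

The check only knows what a human wrote into `REQUIRED`. That's the point: the judgment stays with the person, and the machine does the repetitive comparison.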

The Question I Ask Every Team Using AI for Security

Here's what I want you to take home:

Are you using AI for things it's actually good at?

Most teams I've watched aren't. They hear "AI for security" and throw it at everything. Testing. Configuration. Monitoring. Response. All of it.

Then they're confused when it works for some things and fails for others.

The secret is matching the tool to the task. AI for high-volume testing. Humans for judgment-heavy configuration. That's the winning combination.

The Brand Takeaway

Here's what I want people to think when they hear Fredsazy talk about AI and security:

"They don't just trust AI. They understand where AI wins and where it stumbles."

Anyone can say "AI is amazing for security." The people who get noticed — who get trusted with real decisions — are the ones who know the difference between testing and configuration. Between volume and judgment. Between what AI can do and what it shouldn't.

That's the 3,600x gap. Now you know it too.

One Last Thing

Look at your own security workflows right now.

Where are you using AI? For testing? Great. That's where it shines.

For configuration? Be careful. That's where it fails.

Run one experiment this week. Let AI test something. Let a human configure something else. Compare the results.

You'll see the gap yourself.

Written by Fredsazy — because 3,600x is impressive, but context still belongs to humans.

Iria Fredrick Victor

Iria Fredrick Victor (aka Fredsazy) is a software developer, DevOps engineer, and entrepreneur. He writes about technology and business, drawing from his experience building systems, managing infrastructure, and shipping products. His work is guided by one question: "What actually works?" Instead of recycling news, Fredsazy tests tools, analyzes research, runs experiments, and shares the results, including the failures. His readers get actionable frameworks backed by real engineering experience, not theory.

Share this article:

Related posts

More from Software

May 27, 2026

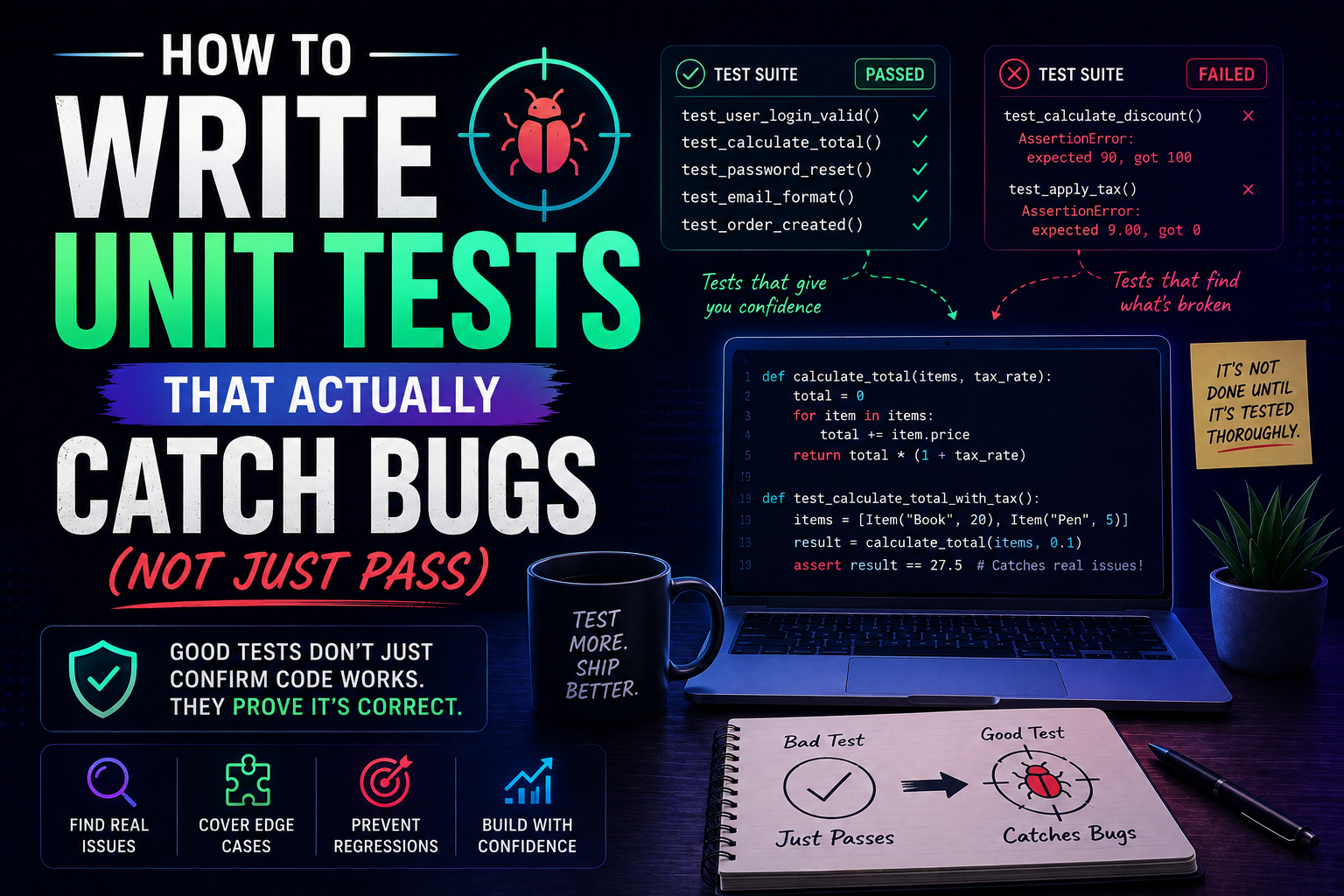

42Learn how to write unit tests that actually catch bugs — not just pass. Covers the AAA pattern, behaviour vs implementation testing, edge cases, test doubles, mutation testing, and a pre-commit checklist for writing tests that protect production.

May 22, 2026

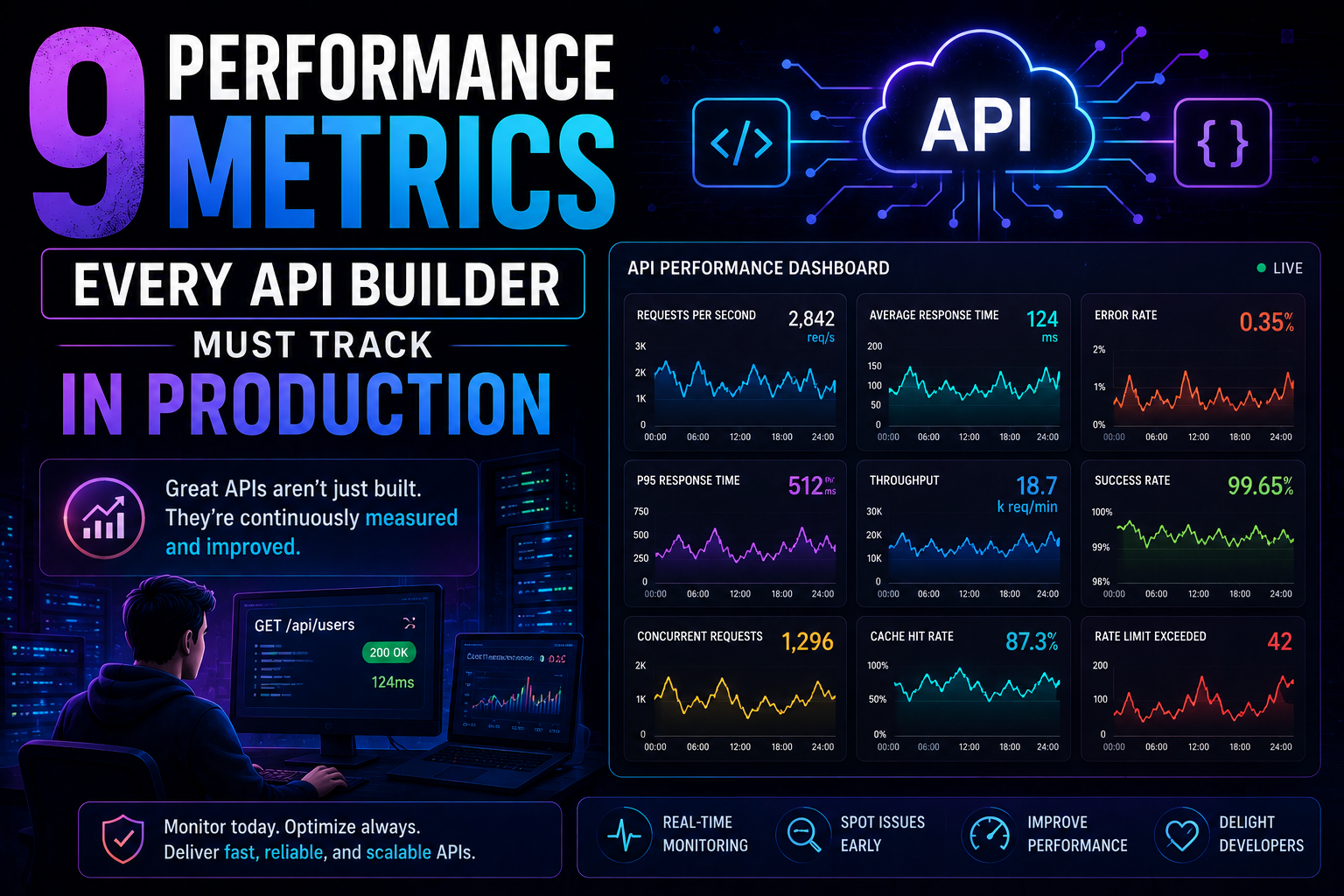

83Latency percentiles. Dependency health. Connection pool saturation. Idempotency success. 9 production metrics every API builder needs – with benchmarks, alert thresholds, and real-world failures prevented.

May 13, 2026

98PostgreSQL vs MongoDB in 2026 — an honest comparison covering benchmarks, data modelling, ACID transactions, scaling, pgvector vs Atlas Vector Search, pricing, and a decision framework for your project.