Lines of Code Are Back (And It's Worse Than Before)

We killed LoC as a metric. Then AI brought it back from the dead. Fredsazy explains why more code is now more dangerous — and what to measure instead.

For years, we told ourselves lines of code don't matter. Small is beautiful. Delete more than you add. Then AI arrived, and suddenly everyone is shipping huge PRs with a straight face. The code is generated, not written. The complexity is hidden, not solved. And the old problems — maintainability, review time, bug density — are back, but worse. Here's what I've learned from cleaning up AI-generated messes.

Let me tell you about a PR that broke me.

A few months ago, someone on a team I was helping submitted a pull request. It added over 8,000 lines of code. They'd written maybe 200 of them personally.

The rest came from an AI coding agent.

They'd prompted: "Build a complete user settings dashboard with all the features."

The agent delivered. Beautifully formatted. Perfectly indented. Fully functional.

And completely unmaintainable.

The same component was implemented three different ways. Helper functions were duplicated across multiple files. There was a massive switch statement that should have been a simple lookup table. Error handling was copy-pasted everywhere instead of abstracted once.

The AI had generated code like a hyperactive intern who'd never heard of DRY.
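To make the switch-versus-lookup point concrete, here is a minimal sketch in Python. The setting keys and labels are hypothetical, invented for illustration, and are not taken from the PR itself.

```python
# Hypothetical example: the setting keys and labels are invented for illustration.

def label_for_setting_verbose(key: str) -> str:
    """The shape an agent tends to produce: one branch per case."""
    if key == "email_notifications":
        return "Email notifications"
    elif key == "dark_mode":
        return "Dark mode"
    elif key == "two_factor":
        return "Two-factor authentication"
    else:
        return "Unknown setting"


# The same behavior expressed as data: adding a setting is a one-line change.
SETTING_LABELS = {
    "email_notifications": "Email notifications",
    "dark_mode": "Dark mode",
    "two_factor": "Two-factor authentication",
}


def label_for_setting(key: str) -> str:
    """Look the label up instead of branching on every case."""
    return SETTING_LABELS.get(key, "Unknown setting")
```

Both functions return the same result for every input. The second one simply has less surface area to review.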

It took two days to refactor that PR down to about 1,200 lines. Same functionality. Actually readable. Actually maintainable.

That was the moment I realized: lines of code are back. And it's worse than before.

The History Lesson Nobody Asked For

Let me remind you why we stopped caring about LoC in the first place.

Back in the 90s and 2000s, managers measured developer productivity by lines of code written. More lines meant better work. This led to obvious nonsense: verbose code, copy-paste programming, and functions that should have been three lines stretched to thirty.

The industry wised up. We started celebrating deletions. "The best code is no code." "Simplicity is the ultimate sophistication." All of that.

Then AI arrived.

And suddenly, nobody feels bad about huge PRs anymore. "The AI wrote it." "It works, doesn't it?" "We'll clean it up later."

We've regressed twenty years in twelve months.

Why AI-Generated Code Is Different (And Worse)

Here's what makes this new LoC problem more dangerous than the old one.

Old problem: A human wrote verbose code because they were lazy or gaming a metric.

New problem: An AI wrote verbose code because it literally doesn't understand abstraction.

Let me explain.

When a human duplicates code, they know they're duplicating it. They might have a reason. They might be in a hurry. But they know.

When an AI duplicates code, it doesn't know anything. It saw a pattern that worked in one file. It saw the same pattern later. It generated both. No awareness that these could share a function. No understanding of technical debt. No memory of the first occurrence.

The AI isn't being malicious. It's being pattern-complete. It generates what it's seen. And what it's seen is often repetitive, bloated, and redundant.

The result? In the projects I've worked on, AI-generated code has been consistently more verbose than what the same teams wrote by hand.

What I've Noticed From Reviewing AI-Generated Code

I don't have a formal study. I have experience. And here's what I've seen consistently.

More duplication. Human-written code might have a few copy-paste moments. AI-generated code often has dozens. The AI doesn't know it already solved that problem three files ago.

Larger files. Humans naturally split things up when a file feels "too big." AI has no feeling. It will happily write a 1,000-line file without blinking.

Harder to refactor. When you don't fully understand how code works — because an AI wrote it — you're afraid to touch it. That fear is real. It slows everything down.

I've watched teams take twice as long to add new features to AI-generated code compared to human-written code. The AI got them to "it works" faster. Then it cost them.

The Real Problem Isn't LoC. It's Hidden Complexity.

Let me get precise.

I don't actually care how many lines you have. I care about cognitive load — how much a developer needs to hold in their head to make a change.

AI-generated code increases cognitive load in three ways:

1. Inconsistent patterns

A human developer tends to be consistent. They'll use the same error handling pattern throughout a project. The same naming conventions. The same architectural style.

An AI samples from everything it's seen. One file might use async/await. Another might use callbacks. A third might mix both. The code works. But switching between files feels like switching between different programmers.

2. Hidden dependencies

When a human writes a function, they usually keep it focused. One job. Clear inputs and outputs.

When an AI writes a function, it sometimes adds "helpful" side effects. It might log to a file. Or update a global cache. Or call an API you didn't ask for. These dependencies are invisible until they break.

3. Over-engineering

Ask an AI to "parse a CSV file" and it might give you a 200-line solution with error recovery, streaming, and automatic type detection.

You needed maybe ten lines.

The extra lines aren't free. They're surface area for bugs. They're complexity you have to understand. They're debt you didn't ask for.
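For a sense of scale, this is roughly what the ten-line version might look like in Python using the standard library's csv module. It is a sketch that assumes a small, well-formed file with a header row. The sample data is made up.

```python
import csv
import io


def read_rows(csv_text: str) -> list[dict[str, str]]:
    """Parse CSV text with a header row into a list of dicts, one per row."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return list(reader)


# Usage with a small in-memory sample (the data is made up).
sample = "name,plan\nAda,pro\nLinus,free\n"
rows = read_rows(sample)
print(rows[0]["name"], rows[1]["plan"])  # prints: Ada free
```

If you later need streaming or type detection, you can add it when the requirement actually shows up.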

A Ratio I Started Tracking (Informally)

I began tracking something I call review-to-write ratio.

It's simple: for every hour an AI spends writing code, how many hours do I spend reviewing, testing, and refactoring it?

In ideal conditions, that ratio would be low — maybe half an hour of review for every hour of generation.

In reality, from what I've seen across teams using AI coding tools, the ratio often flips. One hour of AI generation can easily become two or three hours of human cleanup.

That's not automation. That's taxation.

And when junior developers use AI without careful review, the ratio gets even worse. They trust the AI. They don't question it. They ship the huge PR. Then someone else has to fix it.

The lines of code are back. And they're costing more time than ever.
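The review-to-write ratio described above is simple arithmetic. Here is a minimal sketch, with a hypothetical function name and illustrative numbers rather than measured data.

```python
def review_to_write_ratio(generation_hours: float, cleanup_hours: float) -> float:
    """Hours of human review, testing, and refactoring per hour of AI generation."""
    if generation_hours <= 0:
        raise ValueError("generation_hours must be positive")
    return cleanup_hours / generation_hours


# Illustrative numbers only, matching the ranges described above.
ideal = review_to_write_ratio(generation_hours=1.0, cleanup_hours=0.5)
typical = review_to_write_ratio(generation_hours=1.0, cleanup_hours=2.5)
print(f"ideal={ideal:.1f} typical={typical:.1f}")  # prints: ideal=0.5 typical=2.5
```

Anything above 1.0 means humans are spending more time cleaning up than the tool spent generating.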

What Actually Works (From Someone Fighting This Daily)

I haven't abandoned AI coding. But I've changed how I review it.

Rule 1 – No AI-generated PR over 500 lines

If an agent writes more than 500 lines in a single PR, reject it. Break it into smaller PRs. Review each one separately. You'll catch more bugs and maintain your sanity.
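One way to enforce this is a small script that reads the output of `git diff --numstat` and sums the added lines. The sketch below assumes you pipe that output in as text. The function names are hypothetical, and the 500-line limit is the one from this rule.

```python
MAX_ADDED_LINES = 500  # the limit from Rule 1


def added_lines(numstat_output: str) -> int:
    """Sum the 'added' column of `git diff --numstat` output.

    Each line holds added count, deleted count, and path, separated by tabs.
    Binary files report '-' for both counts and are skipped here.
    """
    total = 0
    for line in numstat_output.splitlines():
        parts = line.split("\t")
        if len(parts) >= 3 and parts[0].isdigit():
            total += int(parts[0])
    return total


def pr_too_large(numstat_output: str, limit: int = MAX_ADDED_LINES) -> bool:
    """True when the PR adds more lines than the limit allows."""
    return added_lines(numstat_output) > limit
```

You could feed it the output of something like `git diff --numstat main...HEAD`, assuming your base branch is called `main`.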

Rule 2 – Run a duplication detector before merging

Free copy-paste detectors such as jscpd and PMD's CPD will show you duplicated blocks across files. Run one. Make sure duplicates are refactored before the PR is approved.
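If you are curious how duplication detection works in principle, here is a deliberately naive sketch. It slides a fixed-size window over the non-blank lines of each file and reports any block that shows up more than once. Real tools are far more sophisticated. The function name and window size are assumptions for illustration.

```python
from collections import defaultdict


def find_duplicate_blocks(
    files: dict[str, str], window: int = 4
) -> dict[str, list[tuple[str, int]]]:
    """Map each repeated block of `window` non-blank lines to its locations.

    `files` maps a file name to its source text. Locations are
    (file name, 1-based line number where the block starts).
    """
    seen = defaultdict(list)
    for name, text in files.items():
        # Keep (line number, stripped text) for non-blank lines only.
        lines = [
            (i, ln.strip())
            for i, ln in enumerate(text.splitlines(), start=1)
            if ln.strip()
        ]
        for start in range(len(lines) - window + 1):
            chunk = lines[start:start + window]
            block = "\n".join(txt for _, txt in chunk)
            seen[block].append((name, chunk[0][0]))
    # Only blocks that occur in more than one place count as duplicates.
    return {block: locs for block, locs in seen.items() if len(locs) > 1}
```

This only catches exact matches after whitespace stripping, so renamed variables slip through. That is one reason to prefer a dedicated tool for real use.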

Rule 3 – Ask "could this be shorter?" for every AI-generated function

Train yourself and your team to question AI output. "This is 50 lines. Could it be 10?" Often, yes. The AI just didn't bother.

Rule 4 – Track LoC trends, not absolutes

Don't obsess over total lines. Do watch the trend. Is your codebase growing faster than features? That's a warning sign. AI might be generating bloat.
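A rough way to watch the trend is to record total lines and features shipped at regular intervals, then compare lines added per feature over time. The sketch below uses hypothetical function names and made-up numbers. How you count "features" is up to your team.

```python
def lines_per_feature(snapshots: list[tuple[int, int]]) -> list[float]:
    """For consecutive snapshots of (total_lines, features_shipped),
    return lines added per feature shipped in each interval."""
    rates = []
    for (loc_a, feat_a), (loc_b, feat_b) in zip(snapshots, snapshots[1:]):
        new_features = feat_b - feat_a
        if new_features > 0:  # skip intervals where nothing shipped
            rates.append((loc_b - loc_a) / new_features)
    return rates


def is_bloating(rates: list[float]) -> bool:
    """Warning sign: each interval costs more lines per feature than the last."""
    return len(rates) >= 2 and all(b > a for a, b in zip(rates, rates[1:]))


# Made-up monthly snapshots: (total lines, features shipped so far).
history = [(10_000, 20), (12_000, 24), (16_000, 28), (24_000, 32)]
rates = lines_per_feature(history)
print(rates, is_bloating(rates))  # prints: [500.0, 1000.0, 2000.0] True
```

A rising number is not proof of bloat on its own, but it tells you where to look.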

The Question I Ask Every AI-Heavy Team

Here's what I want you to take home:

If your AI assistant disappeared tomorrow, would you be able to maintain your codebase?

If the answer is no, you have a problem. You've outsourced understanding to a machine that doesn't understand anything.

The best teams use AI to draft, then rewrite in their own voice. They treat AI-generated code as a suggestion, not a submission. They review with suspicion, not gratitude.

The worst teams treat AI like a senior engineer. They trust it. They ship it. They wake up six months later with a codebase nobody understands.

Don't be the worst team.

How to Fix Your AI-Bloated Codebase (Starting Tomorrow)

If you're already feeling the pain, here's a recovery plan:

Week 1 – Look for duplication

Pick your biggest file. Scan for repeated patterns. Refactor the top three duplicates. You'll be surprised how many lines disappear.

Week 2 – Set a max file size

Try 500 lines as a limit. No file goes over. Break big files into modules. You'll discover hidden dependencies you didn't know existed.
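Finding the offenders takes only a few lines. The sketch below counts lines per file under a directory and lists the ones over the limit, largest first. The function names, the `.py` suffix, and the `src` directory in the usage note are assumptions for illustration.

```python
from pathlib import Path

MAX_FILE_LINES = 500  # the limit suggested for Week 2


def oversized(
    line_counts: dict[str, int], limit: int = MAX_FILE_LINES
) -> list[tuple[str, int]]:
    """Return (path, line count) for files over the limit, largest first."""
    over = [(path, n) for path, n in line_counts.items() if n > limit]
    return sorted(over, key=lambda item: item[1], reverse=True)


def count_lines(root: str, suffix: str = ".py") -> dict[str, int]:
    """Count lines in every file under `root` whose name ends in `suffix`."""
    counts = {}
    for path in Path(root).rglob(f"*{suffix}"):
        if path.is_file():
            with path.open(encoding="utf-8", errors="replace") as handle:
                counts[str(path)] = sum(1 for _ in handle)
    return counts
```

Calling `oversized(count_lines("src"))` would give you a worklist for the week, starting with the biggest file.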

Week 3 – Review AI output more carefully

Before merging AI-generated code, ask: "Do I understand every line?" If not, rewrite the parts you don't understand. That's learning, not waste.

Week 4 – Write custom instructions for your AI

Most coding assistants let you add preferences. Try: "Prefer small functions. Avoid duplication. Never exceed 200 lines per file." It won't follow perfectly. But it will help.

The Brand Takeaway

Here's what I want people to think when they hear Fredsazy:

"They don't just use AI. They understand what AI actually does to codebases."

Anyone can generate 10,000 lines. The people who get noticed — who get trusted with the important projects — are the ones who can keep a codebase clean even when AI is trying to bloat it.

Lines of code are back. But this time, we know the fight. Review hard. Refactor often. And never trust a huge AI-generated PR without looking closely.

One Last Thing

Go look at your last AI-assisted PR. Really look.

How many lines could be deleted without changing behavior? How many duplicates are hiding in different files? How many functions are doing more than one thing?

Take fifteen minutes to check. You might be surprised. You might be horrified.

Either way, you'll know what to fix.

Written by Fredsazy — because 8,000 lines of AI code is still 8,000 lines of debt.

Iria Fredrick Victor

Iria Fredrick Victor (aka Fredsazy) is a software developer, DevOps engineer, and entrepreneur. He writes about technology and business, drawing from his experience building systems, managing infrastructure, and shipping products. His work is guided by one question: "What actually works?" Instead of recycling news, Fredsazy tests tools, analyzes research, runs experiments, and shares the results, including the failures. His readers get actionable frameworks backed by real engineering experience, not theory.

Share this article:

Related posts

More from Software

May 27, 2026

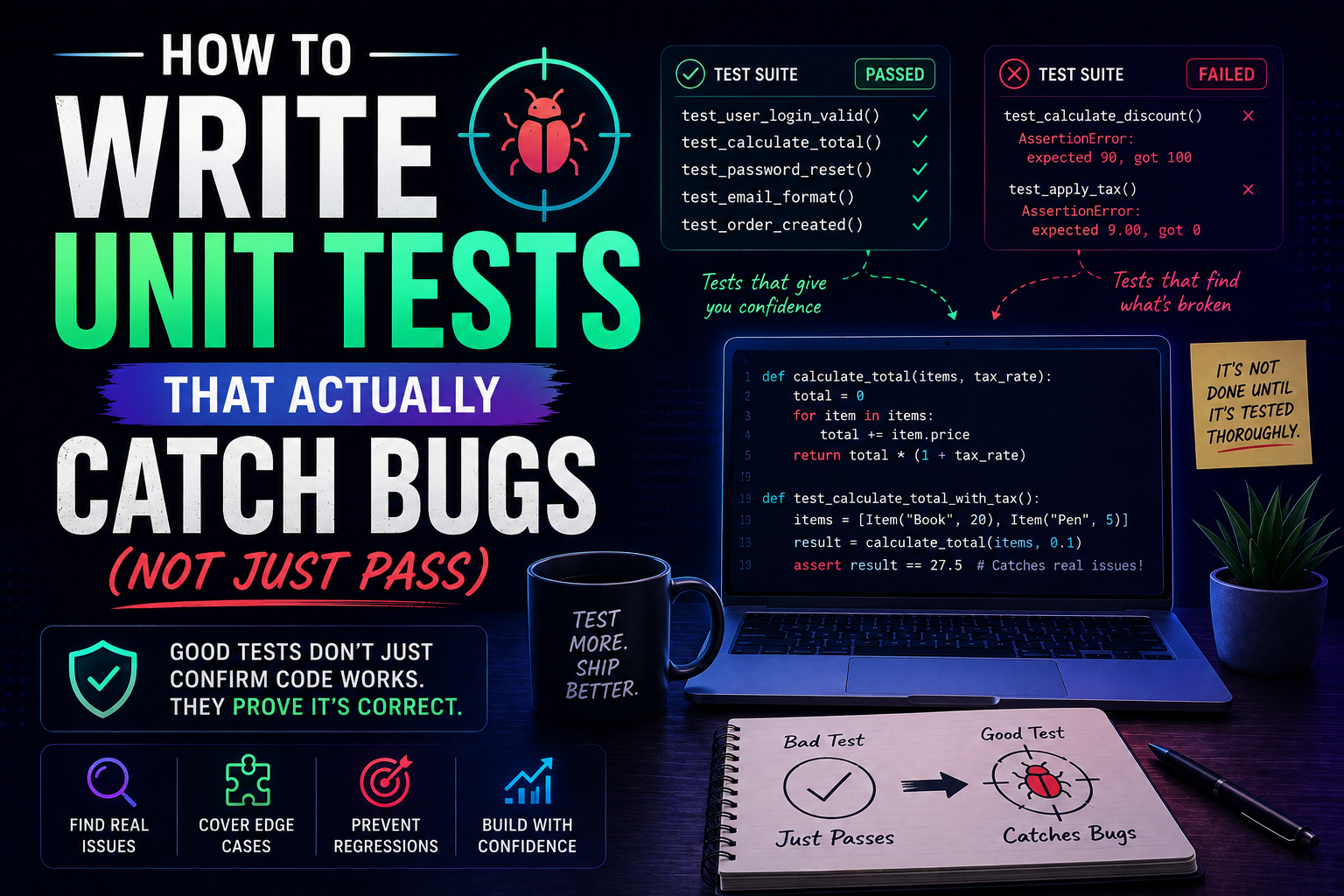

42Learn how to write unit tests that actually catch bugs — not just pass. Covers the AAA pattern, behaviour vs implementation testing, edge cases, test doubles, mutation testing, and a pre-commit checklist for writing tests that protect production.

May 22, 2026

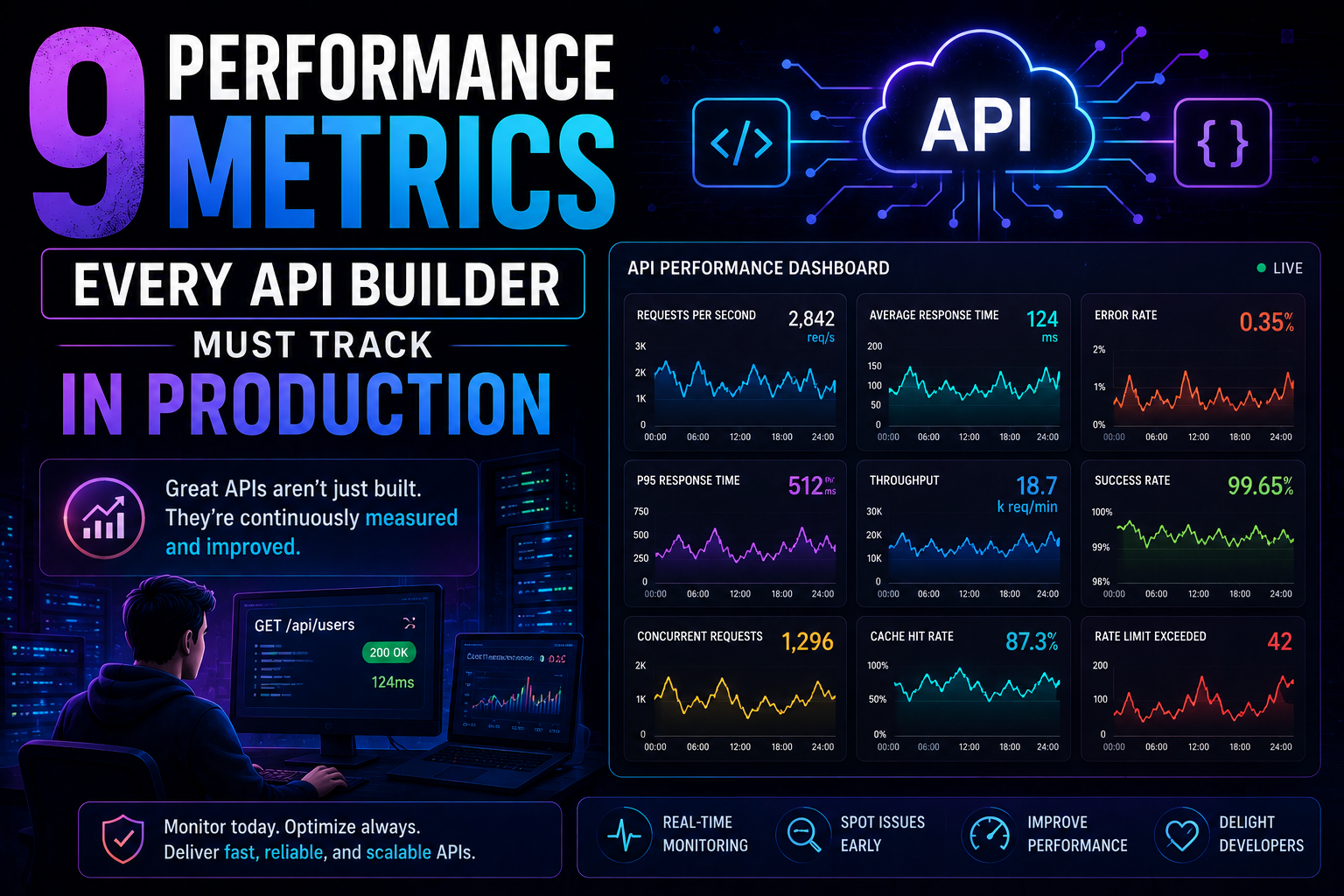

83Latency percentiles. Dependency health. Connection pool saturation. Idempotency success. 9 production metrics every API builder needs – with benchmarks, alert thresholds, and real-world failures prevented.

May 13, 2026

98PostgreSQL vs MongoDB in 2026 — an honest comparison covering benchmarks, data modelling, ACID transactions, scaling, pgvector vs Atlas Vector Search, pricing, and a decision framework for your project.