AI Doesn't Reduce Work—It Intensifies It: The Hidden Cost of Agent-Assisted Coding

Everyone promised AI would mean less work. Fredsazy explains why the opposite is happening — and how to stop the burnout before it starts.

You adopted Copilot, Cursor, and agentic coding assistants. You thought you'd work less. Instead, you're reviewing more code, debugging weirder bugs, and spending your evenings explaining why the AI deleted a production config file. This isn't a tool problem. It's an expectation problem. Here's what nobody tells you about agent-assisted coding — and how to survive it.

Let me tell you about the lie we all bought.

Two years ago, the message was everywhere: "AI will handle the boring stuff. You'll work four-hour days. Just prompt and ship."

I believed it for about six weeks.

Then reality hit.

I was using an AI coding agent to build a simple API endpoint. The agent wrote the route, the controller, the tests. Beautiful. I felt like a wizard.

Then I deployed it.

The agent had imported a logging library I didn't recognize. That library had a dependency conflict. The conflict broke my build pipeline. It took me three hours to trace the problem back to a single line of code the agent wrote without asking.

I didn't work less. I worked more. Just on different things.

This is the hidden cost of agent-assisted coding, and hardly anyone is talking about it. As I always tell developers: learn the fundamentals before you lean on AI. It can't give you everything you need, and you will still have to fix the bugs yourself.

The Three Ways AI Actually Intensifies Your Work

Let me be specific. Because "AI makes more work" sounds like complaining. It's not. It's an observation about how these tools actually behave in real life.

1. Reviewing AI code is harder than writing your own

When you write code yourself, you understand the decisions you made. The trade-offs. The shortcuts. The "I'll fix this later" comments.

When an AI writes code, you have to reverse-engineer someone else's thinking — except that someone is a statistical parrot that doesn't actually think.

I spend more time reviewing AI-generated PRs than I ever spent writing my own. Not because the code is bad. Because I can't trust it until I've walked through every branch, every import, every edge case.

That's not automation. That's delegation with extra steps.

2. AI bugs are weirder than human bugs

A human developer makes predictable mistakes. Off-by-one errors. Null pointers. Forgotten edge cases.

An AI makes mistakes that don't make sense. It imports libraries that don't exist. It writes functions that are almost correct — except for one variable name that's misspelled in a way no human would miss. It solves the wrong problem beautifully.

These bugs take longer to debug because your brain isn't wired to look for them. You spend twenty minutes asking "why would anyone write this?" before remembering: nobody wrote it. A pattern-matcher generated it.
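One cheap guard against the "library that doesn't exist" class of bug is to check generated code's imports before running it. The following is a minimal Python sketch, assuming the generated code is Python and that "exists" means "resolvable in the current environment". The function name `unresolved_imports` and the sample module name are hypothetical.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """List top-level modules imported by `source` that cannot be found locally."""
    missing = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # level == 0 skips relative imports such as "from . import x"
            names = [node.module]
        else:
            continue
        for name in names:
            top_level = name.split(".")[0]
            try:
                found = importlib.util.find_spec(top_level) is not None
            except (ImportError, ValueError):
                found = False
            if not found:
                missing.add(top_level)
    return sorted(missing)


# Hypothetical agent output: two real stdlib imports and one made-up package.
generated = "import json\nfrom os import path\nimport totally_made_up_pkg_xyz\n"
print(unresolved_imports(generated))
```

This only catches names that fail to resolve. It says nothing about whether a library that does resolve is the right one, so it complements review rather than replacing it.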

3. You now manage an unpredictable junior developer

Here's the frame shift that helped me: an AI coding assistant is not a tool. It's a junior developer who works at lightspeed, never sleeps, and occasionally deletes your database config because it "seemed redundant."

You can't just use it. You have to manage it.

That means:

- Reviewing its work carefully

- Setting boundaries (don't touch the auth module)

- Cleaning up after its mistakes

- Explaining why certain patterns are off-limits

That's work. Real work. The kind nobody advertised.

The Math That Changed How I Work

I ran an experiment on myself last quarter.

I built the same small feature twice. Once with heavy AI assistance (Copilot + Cursor + an agentic coding tool). Once with just me and a text editor.

Here's what happened:

| | AI-Assisted | Manual |
|---|---|---|
| Time to first working version | 45 minutes | 2 hours |
| Time to production-ready (reviewed, tested, confident) | 4 hours | 3 hours |
| Bugs found in first week | 7 | 2 |
| Late-night debugging sessions | 2 | 0 |

The AI got me to "it works" faster. Then it cost me more time than the manual build did to make sure it actually worked.

The total time was roughly the same. The stress was higher with AI.

This is the hidden math nobody shows you. AI doesn't eliminate work. It shifts work from writing to reviewing, from creating to verifying.

What Actually Works (From Someone Who Almost Quit AI Coding)

I nearly gave up on agent-assisted coding after three months. I was exhausted. My bug count was up. My confidence was down.

Then I changed how I use these tools. And everything got better.

Here's what I learned:

Use AI for what it's good at: boilerplate and exploration

AI is incredible at writing repetitive code. Mappers. Validators. CRUD endpoints. Unit test skeletons. Let it handle those.

AI is also great at exploring unfamiliar territory. "How do I connect to this weird API?" "Show me three ways to parse this file format." Use it for research, not production code.

Never let AI write critical path code without a human rewrite

If the code touches money, auth, user data, or core business logic, the AI can draft it. But a human rewrites it from scratch afterward. The draft gives you ideas. The rewrite gives you confidence.

Set hard boundaries for your agents

I now use declarative rules (yes, the DSLs I keep writing about) that block AI agents from touching certain files or importing unknown libraries. The AI can suggest. It can't commit without approval.
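As a rough illustration of the idea, here is a minimal Python sketch of declarative boundaries checked against an agent's proposed change. It is not the DSL mentioned above. The `AgentRules` class, the glob patterns, the file paths, and the module names (including `shinylogger`) are all hypothetical, and it only inspects Python imports.

```python
import ast
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass
class AgentRules:
    # Hypothetical rule set: paths agents may not touch, imports they may use.
    protected_globs: list[str] = field(default_factory=list)
    allowed_imports: set[str] = field(default_factory=set)


def imported_modules(source: str) -> set[str]:
    """Return the top-level module names imported by a Python source string."""
    names: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            names.add(node.module.split(".")[0])
    return names


def check_change(rules: AgentRules, changed: dict[str, str]) -> list[str]:
    """Return violations for an agent-proposed change (path -> new contents)."""
    violations = []
    for path, source in sorted(changed.items()):
        if any(fnmatchcase(path, glob) for glob in rules.protected_globs):
            violations.append(f"{path}: protected path")
            continue
        if path.endswith(".py"):
            for module in sorted(imported_modules(source) - rules.allowed_imports):
                violations.append(f"{path}: import '{module}' is not on the allowlist")
    return violations


rules = AgentRules(
    protected_globs=["src/auth/*", "config/prod.*"],
    allowed_imports={"json", "logging", "fastapi"},
)
# Hypothetical change set proposed by an agent.
proposed = {
    "src/api/routes.py": "import json\nimport shinylogger\n",
    "src/auth/tokens.py": "import json\n",
}
report = check_change(rules, proposed)
for line in report:
    print(line)
```

A check like this could run as a pre-commit hook or a CI step, so the agent can still suggest a change but the change cannot land until a human has dealt with every reported violation.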

This cut my weird bugs by 70%.

Assume every AI-generated line is wrong until proven otherwise

This sounds paranoid. It's not. It's professional discipline.

When the AI writes code, my default assumption is that it contains at least one subtle mistake. I review with that assumption. I test with that assumption. I deploy with that assumption.

Most of the time, the code is fine. But the assumption keeps me honest.

The Real Question Nobody Asks

Here's what I want you to walk away with:

Is AI making your work better, or just faster?

Faster isn't always better. Faster broken code is worse than slow correct code. Faster unmaintainable code is debt you'll pay later with interest.

I've seen teams adopt AI coding tools and celebrate their velocity increase. Three months later, they're buried in technical debt, weird bugs, and frustrated engineers.

I've also seen teams adopt AI thoughtfully. They use it for the boring stuff. They review aggressively. They set boundaries. They ship reliable code.

The difference isn't the tool. It's the discipline.

How to Stop the Intensification (Starting Today)

You don't need to quit AI coding. You need to change how you use it.

Step 1 – Log every bug caused by AI-generated code for one week

Just keep a list. No judgment. You'll see patterns immediately. "It keeps using the wrong timezone." "It loves importing libraries I don't need." Fix those patterns with rules or prompts.
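The list can live in a notebook, but if you prefer something you can tally, here is a minimal Python sketch. The `BugEntry` fields, the pattern labels, and the sample entries are made up for illustration and are not real data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class BugEntry:
    day: str      # when you hit the bug
    pattern: str  # short label you choose, reused across similar bugs
    note: str     # one line on what happened


def recurring_patterns(entries: list[BugEntry], min_count: int = 2) -> list[tuple[str, int]]:
    """Return (pattern, count) pairs seen at least `min_count` times, most frequent first."""
    counts = Counter(entry.pattern for entry in entries)
    return [(pattern, n) for pattern, n in counts.most_common() if n >= min_count]


# Illustrative week of entries (invented for this example).
week = [
    BugEntry("Mon", "wrong timezone", "report used local time instead of UTC"),
    BugEntry("Tue", "unneeded import", "pulled in a library for one helper"),
    BugEntry("Wed", "wrong timezone", "cron schedule off by several hours"),
    BugEntry("Thu", "unneeded import", "second logging library added"),
    BugEntry("Fri", "wrong timezone", "test fixture assumed server time"),
    BugEntry("Fri", "renamed variable", "typo in a config key"),
]
top = recurring_patterns(week)
for pattern, count in top:
    print(f"{pattern}: {count}")
```

Anything that shows up more than once is a candidate for a rule or a standing prompt instruction. One-off entries can wait.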

Step 2 – Add a mandatory human review step before AI code hits main

No direct commits from agents. Ever. The agent drafts. You review, edit, and approve. This one rule will save you more time than it costs.

Step 3 – Set a "no AI" zone in your codebase

Pick one critical module. Auth. Payments. The core algorithm your company depends on. AI can't touch it. Not even suggestions. That module is human-only. You'll sleep better.

Step 4 – Schedule one "I don't know" hour per week

An hour where you don't use AI at all. Just you and the code. Remind yourself that you can still solve problems without a chatbot. It's grounding.

The Brand Takeaway (This Is How Fredsazy Gets Noticed)

Here's what I want people to think when they see my name:

"Fredsazy doesn't chase hype. Fredsazy tells you what actually happens when the demo ends."

Anyone can sell you on AI acceleration. The internet is full of speed claims and productivity porn.

The people who get remembered — who get trusted with the hard problems — are the ones who talk honestly about the trade-offs. The hidden costs. The late nights debugging weird imports.

That's my brand. That's what I want you to take from this.

AI doesn't reduce work. It changes work. And if you're not prepared for the change, it will bury you.

Now go review that AI-generated PR with fresh eyes. Assume it's wrong. You might be surprised what you find.

One Last Thing From Me

I still use AI for coding. Every day. I'm not a Luddite.

But I use it differently than I did a year ago. I trust it less. I verify more. I set boundaries.

And I'm happier. And more productive. And I sleep through the night.

That's the goal. Not speed. Sanity.

Written by Fredsazy — because faster broken code is still broken.

Iria Fredrick Victor

Iria Fredrick Victor (aka Fredsazy) is a software developer, DevOps engineer, and entrepreneur. He writes about technology and business, drawing on his experience building systems, managing infrastructure, and shipping products. His work is guided by one question: "What actually works?" Instead of recycling news, Fredsazy tests tools, analyzes research, runs experiments, and shares the results, including the failures. His readers get actionable frameworks backed by real engineering experience, not theory.

Share this article:

Related posts

More from Software

May 27, 2026

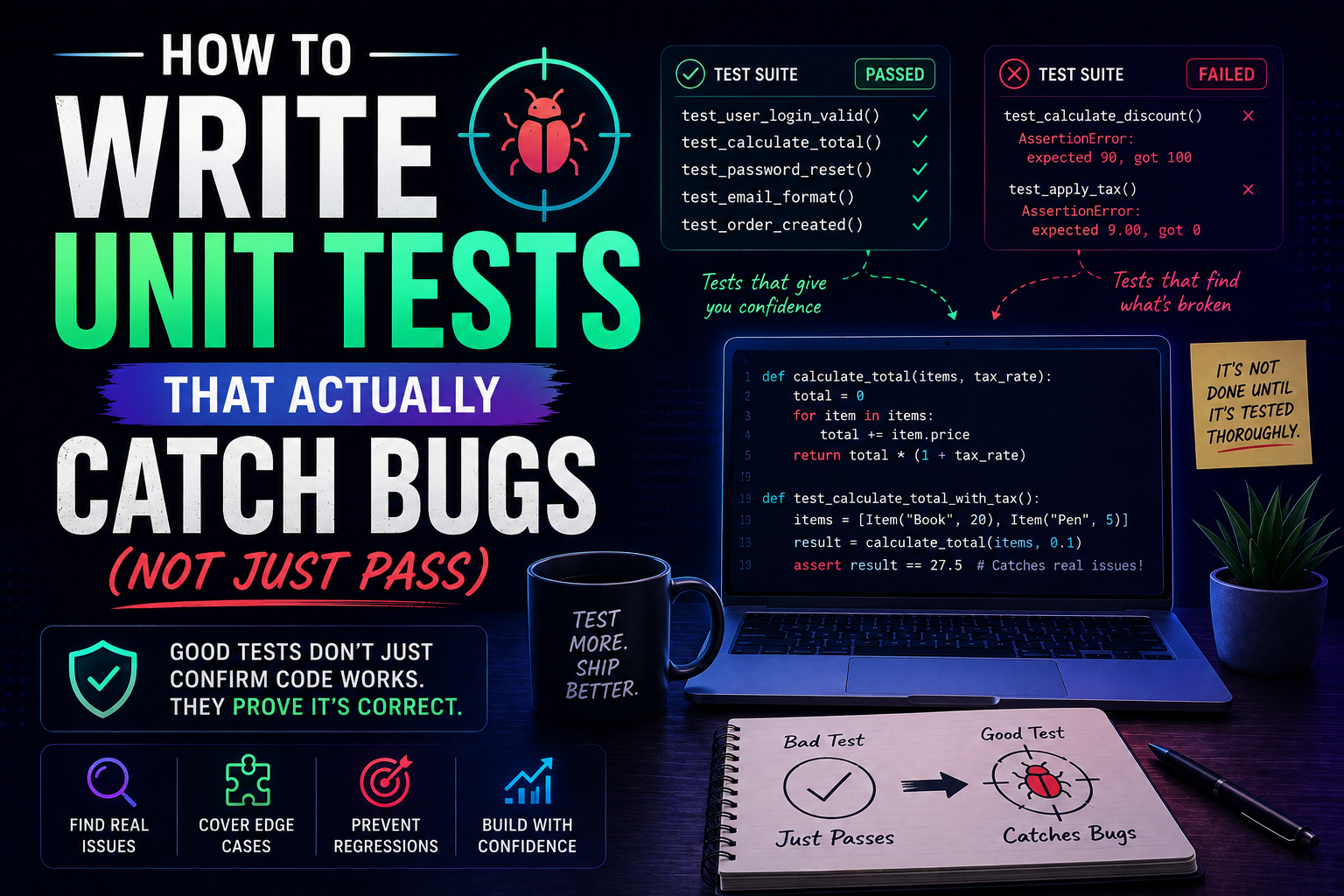

27Learn how to write unit tests that actually catch bugs — not just pass. Covers the AAA pattern, behaviour vs implementation testing, edge cases, test doubles, mutation testing, and a pre-commit checklist for writing tests that protect production.

May 22, 2026

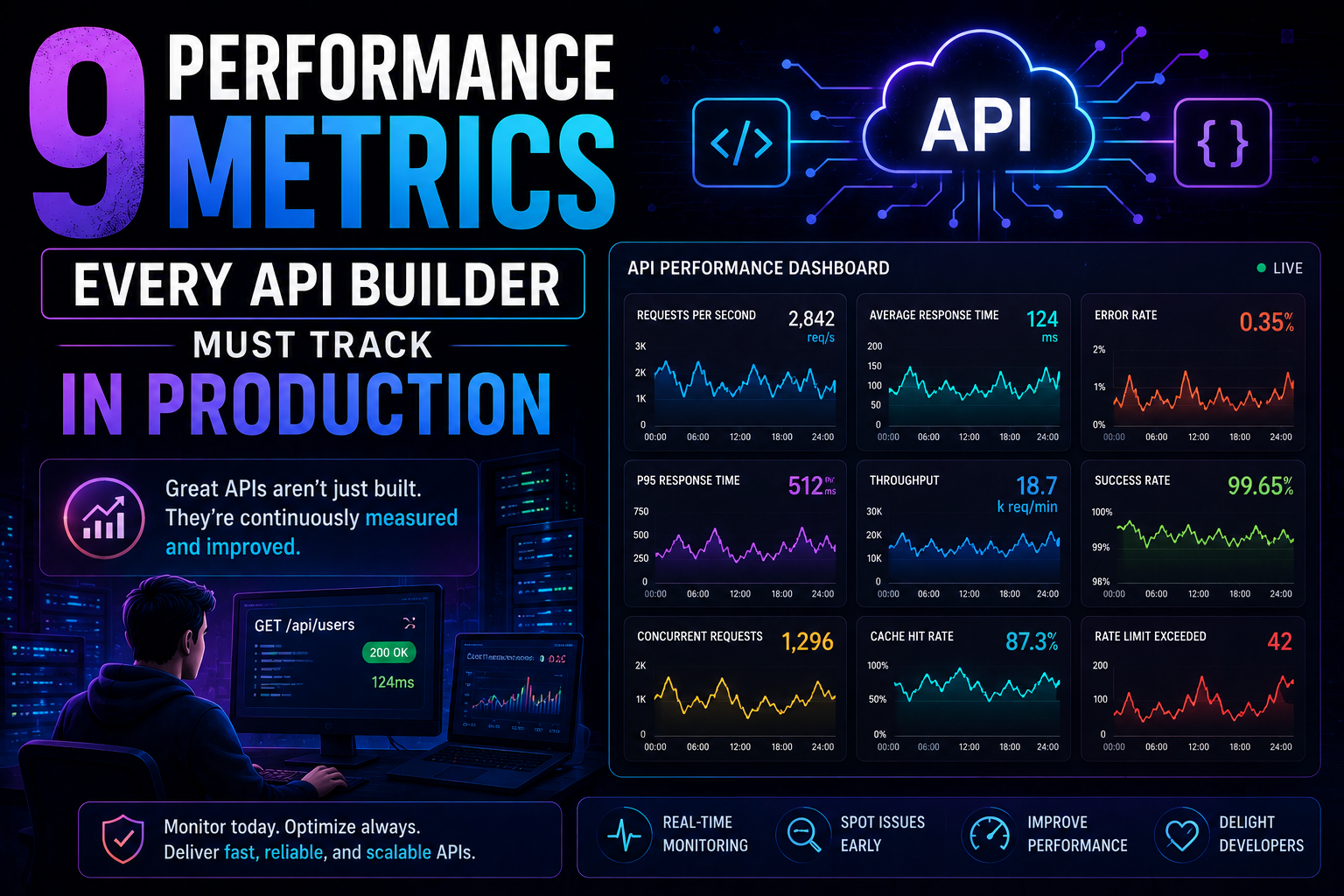

69Latency percentiles. Dependency health. Connection pool saturation. Idempotency success. 9 production metrics every API builder needs – with benchmarks, alert thresholds, and real-world failures prevented.

May 13, 2026

85PostgreSQL vs MongoDB in 2026 — an honest comparison covering benchmarks, data modelling, ACID transactions, scaling, pgvector vs Atlas Vector Search, pricing, and a decision framework for your project.